Making the tough calls: Decisions in the boardroom

Opinion + Analysis, Business + Leadership

BY The Ethics Centre 24 MAR 2026

The scenario is familiar to us all. Company X is in crisis. A series of poor management decisions set in motion a sequence of events that led to an avalanche of bad headlines and public outcry.

When things go wrong for an organisation – so wrong that the carelessness or misdeeds revealed could be considered ethical failure – responsibility is shouldered by those who are the final decision makers. They are and should be held accountable.

Boards of organisations, and the individual directors that comprise them, collectively make decisions about strategy, governance and corporate performance. These decisions involve the interests of shareholders, employees, customers, suppliers and the wider community. They also involve competing values, compromises and tradeoffs, information gaps and grey areas.

Research from The Governance Institute of Australia in 2021 surveyed a pool of directors, executives and high-level working groups to consider the most valued attributes for future board directors. Strategic and critical thinking were once ranked the highest, closely followed by the values of ethics and culture as the two most important areas that boards need to focus on to prevent corporate failure. A culture of accountability, transparency, trust and respect was viewed as a top factor determining a healthy dynamic between boards and management.

Ethics plays a central role in the decisions that face Boards and directors, such as:

- What constitutes a conflict of interest and how should it be managed?

- How aggressive should tax strategies be?

- What incentive structures and sales techniques will create a healthy and ethical organisational culture?

- What about investments in organisations that profit from arms and weaponry?

- How should organisations manage the effects technology has on their workforce?

- What obligation do organisations have to protect the environment and human rights?

Together, The Australian Institute of Company Directors (AICD) and The Ethics Centre have developed a decision-making guide for directors.

Ethics in the Boardroom (2nd edition) provides directors with a simple decision-making framework which they can use to navigate the ethical dimensions of any decision. This guide is a vital resource for directors as a general reference, and should be utilised by boards to strengthen their capacity in ethics, and by individual directors and boards alike to inform conversations about the complex issues they encounter in their roles. Updated from the 2019 first edition, this second edition also reflects a changing landscape and responds to new questions emerging from the adoption of technologies such as artificial intelligence.

Through the insights of directors, academics and subject matter experts, the guide also provides four lenses to frame board conversations. These lenses give directors the best chance of viewing decisions from different perspectives. Rather than talking past each other, they will help directors pinpoint and resolve disagreement.

- Lens 1: General influences – Organisations are participants in society through the products and services they offer and their statuses as employers and influencers. The guide invites directors to seek out the broadest possible range of perspectives to enhance their choices and decisions. It also suggests that organisations should strive for leadership. What do you think about companies that take a stance on matters like climate change and same sex marriage?

- Lens 2: The board’s collective culture and character – In ethical decision making, directors are bound to apply the values and principles of their organisation. As custodians, they must ensure that culture and values are aligned. The guide invites directors to be aware that ethical decision-making in the boardroom must be tempered. Decision making shouldn’t be driven by: form over substance, passion over reason, collegiality over concurrence, the need to be right, or legacy. Just because a particular course of action is legal, does that make it right? Just because a company has always done it that way, should they continue?

- Lens 3: Interpersonal relationships and reasoning – Boards are collections of individuals who bring their own individual decision-making ‘style’ to the board table. Power dynamics exist in any group, with each person influencing and being influenced by others. Making room for diversity and constructive disagreement is vital. How can chairs and other directors empower every director to stand up for what is right? How do boards ensure that the person sitting quietly, with deep insights into ethical risk, has the courage to speak?

- Lens 4: The individual director – Directors bring their own wisdom and values to decision making. But they also might bring their own motivations and biases. The guide invites directors to self-reflect and bring the best of themselves to the board table. How can we all be more reflective in our own decision making?

This guide is a must-read for anyone who has an interest in the conduct of any board-led organisation. That includes schools, sports clubs, charities and family businesses as well as large corporations.

Behind each brand and each company, there are people making decisions that affect you as a consumer, employee and citizen. Wouldn’t you rather that those at the top had ethics at the front of their mind in the decisions that they make?

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

AI can slowly shift an organisation’s core principles. Here's how to spot ‘value drift’ early

Opinion + Analysis, Business + Leadership, Science + Technology

BY Guy Bate and Rhiannon Lloyd 5 MAR 2026

The steady embrace of artificial intelligence (AI) in the public and private sectors in Australia and New Zealand has come with broad guidance about using the new technology safely and transparently, with good governance and human oversight.

So far, so sensible. Aligning AI use with existing organisational values makes perfect sense.

But here’s the catch. Most references to “responsible AI” assume values are like a set of house rules you can write down once, translate into checklists and enforce forever.

But generative AI (GenAI) does not simply follow the rules of the house. It changes the house. GenAI’s distinctive power is not that it automates calculations, but that it automates plausible language.

It writes the summary, the rationale, the email, the policy draft and the performance feedback. In other words, it produces the texts organisations use to explain themselves.

When a system can generate confident, professional-sounding reasons instantly, it can quietly change what counts as a “good reason” to do something.

This is where “value drift” begins – a gradual shift in what feels normal, reasonable or acceptable as people adapt their work to what the technology makes easy and convincing.

Invisible ethical shifts

In the workplace, for example, a manager might use GenAI to draft performance feedback to avoid a hard conversation. The tone is smoother, but the judgement is harder to locate, as is the accountability. Or a policy team uses GenAI to produce a balanced justification for a contested decision. The prose is polished, but the real trade-offs are less visible. For small businesses, the appeal of GenAI lies in speed and efficiency. A sole trader can use it to respond to customers, write marketing copy or draft policies in seconds.

But over time, responsiveness may come to mean instant, AI-generated replies rather than careful, human judgement. The meaning of good service quietly shifts.

None of this requires an ethical breach. The drift happens precisely because the new practice feels helpful.

The biggest ethical effects of GenAI don’t often show up as a single shocking scandal. They are slower and quieter. A thousand small decisions get made a little differently.

Explanations get a little smoother. Accountability becomes a little harder to point to. And before long, we are living with a new normal we did not consciously choose.

If responsible AI use is about more than good intentions and tidy documentation, we need to stop treating values as fixed targets. We need to pay attention to how values shift once AI becomes part of everyday work.

Hidden assumptions

Much of today’s responsible-AI guidance follows a straightforward model: identify the values you care about, embed them in GenAI systems and processes, then check compliance.

This is necessary but also incomplete. Values are not “fixed” once written down in strategy documents or policy templates. They are lived out in practice.

They show up in how people talk, what they notice, what they prioritise and how they justify trade-offs. When technologies change those routines, values get reshaped.

An emerging line of research on technology and ethics shows that values are not simply applied to technologies from the outside. They are shaped from within everyday use, as people adapt their practices to what technologies make easy, visible or persuasive.

In other words, values and technologies shape each other over time, each influencing how the other develops and is understood.

We have seen this before. Social media did not just test our existing ideas about privacy. It gradually changed them. What once felt intrusive or inappropriate now feels normal to many younger users.

The value of privacy did not disappear, but its meaning shifted as everyday practices changed. Generative AI is likely to have similar effects on values such as fairness, accountability and care.

In our research on leadership development, we are exploring how we teach emerging leaders to recognise and reflect on these shifts.

The challenge is not only whether leaders apply the right values to AI, but whether they are equipped to notice how working with these systems may gradually reshape what those values mean in practice.

Constant vigilance

The emphasis in New Zealand and Australia on responsible AI guidance is sensible and pragmatic. It covers governance, privacy, transparency, skills and accountability.

But it still tends to assume that once the right principles and processes are in place, responsibility has been secured.

If values move as AI reshapes practice, though, responsible AI needs a practical upgrade. Principles still matter, but they should be paired with routines that keep ethical judgement visible over time.

Organisations should periodically review AI-mediated decisions in high-stakes areas such as hiring, performance management or customer communication.

They should pay attention not just to technical risks, but to how the meaning of fairness, accountability or care may be changing in practice. And they should make it clear who owns the reasoning behind AI-shaped decisions.

Responsible AI is not about freezing values in place. It is about staying responsible as values shift.

This article was originally published in The Conversation.

BY Guy Bate

Guy is Thematic Lead for Artificial Intelligence, Director of the Master of Business Development (MBusDev) programme, and Professional Teaching Fellow in Strategy and Technology Commercialisation at the University of Auckland Business School.

BY Rhiannon Lloyd

Dr Rhiannon Lloyd is a Senior Lecturer in Leadership in the Management and International Business department at the University of Auckland Business School, and the Director of the Kupe Leadership Scholarship at the University of Auckland. She is also a member of the Aotearoa Centre for Leadership and Governance, UABS. Her research takes a critical look at the theory and practice of responsible and environmental leadership.

Our dollar is our voice: The ethics of boycotting

Opinion + Analysis, Politics + Human Rights, Business + Leadership, Society + Culture

BY Tim Dean 17 FEB 2026

Boycotts can be an effective mechanism for making change. But they can also be weaponised or harmful. Here are some tips to ensure your spending aligns with your values.

There I was, strolling through campus as an undergraduate in the 1990s, casually enjoying a KitKat, when I was accosted by a friend who sternly rebuked me for my choice of snack. Didn’t I know that we were boycotting Nestlé? Didn’t I know about all the terrible things Nestlé was doing by aggressively promoting its baby formula in the developing world, thus discouraging natural breastfeeding?

Well, I did now. And so I took a break from KitKats for many years. Until I discovered the boycott had started and been lifted years before my run-in with my activist friend. As it turned out, a decade prior to my campus encounter, Nestlé had already responded to the boycotters’ concerns and had subsequently signed on to a World Health Organization code that limited the way infant formula could be marketed. So, while my friend was a little behind on the news, it seemed the boycott had worked after all.

Boycotts are no less popular today. In late 2025, dozens of artists pulled their work from Spotify and thousands of listeners cancelled their accounts after discovering that the company’s CEO, Daniel Ek, had invested over 100 million euros in a German military technology company that is developing artificial intelligence systems. In 2023, Bud Light lost its spot as the top-selling beer in the United States after drinkers boycotted it following a social media promotion involving transgender personality Dylan Mulvaney. The Boycott, Divestment and Sanctions (BDS) movement has been promoting boycotts of Israeli products and companies that support Israel for over a decade, gaining greater momentum since the 2023 war in Gaza. And there are many more.

As individuals, we may not have the power to rectify many of the great ills we perceive in the world today, but as agents in a capitalist market, we do have power to choose which products and services to buy.

In this regard, our dollar is our voice, and we can choose to use that voice to protest against things we find morally problematic. Some people – like my KitKat-critical friend – also stress that we must take responsibility for where our money flows, and we have an obligation to not support companies that are doing harm in the world.

However, thinking about whom we support with our purchases puts a significant ethical burden on our shoulders. How should we decide which companies to support and which to avoid? Do we have a responsibility to research every product and every company before handing over our money? How can we be confident that our boycott won’t do more harm than good?

Buyer beware

Boycotts don’t just impose a cost on us – primarily by depriving us of something we would otherwise purchase – they impose a cost on the producer, so it’s important to be confident that this cost is justified.

If buying the product would directly support practices that you oppose, such as damaging the environment or employing workers in sweatshops, then a boycott can contribute to preventing those harms. We can also choose to boycott for other reasons, including showing our opposition to a company’s support for a social issue, raising awareness of injustices or protesting the actions of individuals within an organisation. However, if we’re boycotting something that was produced by thousands of people, such as a film, because of the actions of just one person involved, then we might be unfairly imposing a cost on everyone involved, including those who were not involved in the wrongdoing.

Boycotts can also be used to threaten, intimidate or exclude people, such as those directed against minorities, ethnic or religious groups, or against vulnerable peoples. We need to be confident that our boycott will target those responsible for the harms while not impinging on the basic rights or liberties of people who are not involved.

There are also risks in joining a boycott movement, especially if we feel pressured into doing so or if activists have manipulated the narrative to promote their perspective over alternatives. Take the Spotify boycott, for example. It is true that Spotify’s CEO invested in a military technology company, Helsing. However, many people claimed that the company was supplying weapons to Israel, and that was a key justification for them pulling their support for Spotify. In fact, Helsing had no contracts with Israel and was, instead, focusing its work on the defence of Ukraine and Europe – a cause that many boycotters might even support.

Know your enemy

The risk of acting on misinformation or biased perspectives places greater pressure on us to do our own research, and it’s here that we need to consider how much homework we’re actually willing or able to do.

In the course of writing this article, I delved back into the history of the Nestlé boycott only to find that another group has been encouraging people to avoid Nestlé products because it doesn’t believe that the company is conforming to its own revised marketing policies. Even then, uncovering the details and the evidence is not a trivial undertaking. As such, I’m still not entirely sure where I stand on KitKats.

While we all have an ethical responsibility to act on our values and principles, and make sure that we’re reasonably well informed about the companies and products that we interact with, it’s unreasonable to expect us to do exhaustive research on everything that we buy. That said, if there is reliable information that a company is doing harm, then we have a responsibility to not ignore it and should adjust our purchasing decisions accordingly.

Boycotts also shouldn’t last for ever; an ethical boycott should aim to make itself redundant. Before blacklisting a company, consider what reasonable measures it could take for you to lift your boycott. That might be ceasing harmful practices, compensating those who were impacted, and/or apologising for harms done. If you set the bar too high, there’s a risk that you’re engaging in the boycott to satisfy your sense of outrage rather than seeking to make the world a better place. And if the company clears the bar, then you should have good reason to drop the boycott.

Finally, even though a coordinated boycott can be highly effective, be wary of judging others too harshly for their choice to not participate. Different people value different things, and have different budgets regarding how much cost they are willing to bear when considering what they purchase. Informing others about the harms you believe a company is causing is one thing, but browbeating them for not joining a boycott risks tipping over into moralising.

Boycotts can be a powerful tool for change. But they can also be weaponised or implemented hastily or with malicious intent, so we want to ensure we’re making conscious and ethical decisions for the right reasons. We often lament the lack of power that we have individually to make the world a better place. But if there’s one thing that money is good at, it’s sending a message.

BY Tim Dean

Dr Tim Dean is a public philosopher, speaker and writer. He is Philosopher in Residence and Manos Chair in Ethics at The Ethics Centre.

Should your AI notetaker be in the room?

Opinion + Analysis, Science + Technology, Business + Leadership

BY Aubrey Blanche 3 FEB 2026

It seems like everyone is using an AI notetaker these days. They’re a way for users to stay more present in meetings, keep better track of commitments and action items, and perform much better than most people’s memories. On the surface, they look like a simple example of AI living up to the hype of improved efficiency and performance.

As an AI ethicist, I’ve watched more of the people I meet with use AI notetakers, and it’s increasingly filled me with unease: the tools are invited to more and more meetings (including when the user doesn’t actually attend) and I rarely encounter someone who has explicitly asked for my consent to use the tool to take notes.

However, as a busy executive with days full of context switching across a dizzying array of topics, I felt a lot of FOMO at the potential advantages of taking a load off so I could focus on higher-value tasks. It’s clear why people see utility in these tools, especially in an age where many of us are cognitively overloaded and spread too thin. But in our rush to offload some work, we don’t always stop to consider the best way to do it.

When a “low risk” use case isn’t

It might be easy to think that using AI for something as simple as taking notes isn’t ethically challenging. And if the argument is that it should be ethically low stakes, you’re probably right. But the reality is much different.

Taking notes with technology tangles the complex topics of consent, agency, and privacy. Because taking notes with AI requires recording, transcribing, and interpreting someone’s ideas, these issues come to the fore. To use these technologies ethically, everyone in each meeting should:

- Know that they are being recorded

- Understand how their data will be used, stored, and/or transferred

- Have full confidence that opting out is acceptable.

The reality is that this shouldn’t be hard – but the economics of selling AI notetaking tools means that achieving these objectives isn’t as straightforward as download, open, record. This doesn’t mean that these tools can’t be used ethically, but it does mean that in order to do so we have to use them with intention.

What questions to ask:

What models are being used?

Not all AI is built the same, in terms of both technical performance and the safety practices that surround them. Most tools on the market use foundation models from frontier AI labs like Anthropic and OpenAI (which make Claude and ChatGPT, respectively), but some companies train and deploy their own custom models. These companies vary widely in the rigour of their safety practices. You can get a deeper understanding of how a given company or model approaches safety by seeking out the model cards for a given tool.

The particular risk you’re taking will depend on a combination of your use case and the safeguards put in place by the developer and deployer. For example, there’s significantly more risk in using these tools in conversations where sensitive or protected data is shared, and that risk is amplified by using tools that have weak or non-existent safety practices. Put simply, it’s a higher ethical risk (and potentially illegal) decision to use this technology when you’re dealing with sensitive or confidential information.

Does the tool train on user data?

AI “learns” by ingesting and identifying patterns in large amounts of data, and improves its performance over time by making this a continuous process. Companies have an economic incentive to train using your data – it’s a valuable resource they don’t have to pay for. But sharing your data with any provider exposes you and others to potential privacy violations and data leakages, and ultimately it means you lose control of your data. For example, research has shown that there are techniques that cause large language models (LLMs) to reproduce their training data, and AI creates other unique security vulnerabilities for which there aren’t easy solutions.

For most tools, the default setting is to train on user data. Often, tools will position this approach in terms of generosity, in that providing your data helps improve the service for yourself and others. While users who prioritise sharing over security may choose to keep the default, users that place a higher premium on data security should find this setting and turn it off. Whatever you choose, it’s critical to disclose this choice to those you’re recording.

How and where is the data stored and protected?

The process of transcribing and translating can happen on a local machine or in the “cloud” (which is really just a machine somewhere else connected to the internet). The majority will use a third-party cloud service provider, which expands the potential ethical risk surface.

First, does the tool run on infrastructure associated with a company you’re avoiding? For example, many people specifically avoid spending money on Amazon due to concerns about the ethics of their business operations. If this applies to you, you might consider prioritising tools that run locally, or on a provider that better aligns with your values.

Second, what security protocols does the tool provider have in place? Ideally, you’ll want to see that a company has standard certifications such as SOC 2, ISO 27001 and/or ISO 42001, which show an operational commitment to security, privacy, and safety.

Whatever you choose, this information should be a part of your disclosure to meeting attendees.

How am I achieving fully informed consent?

The gold standard for achieving fully informed consent is making the request explicit and opt in as a default. While first-generation notetakers were often included as an “attendee” in meetings, newer tools on the market often provide no way for everyone in the meeting to know that they’re being recorded. If the tool you use isn’t clearly visible or apparent to attendees, the ethical burden of both disclosure and consent gathering falls on you.

This issue isn’t just an ethical one – it’s often a legal one. Depending on where you and attendees are, you might need a persistent record that you’ve gotten affirmative consent to create even a temporary recording. For me, that means I start meetings with the following:

I wanted to let you know that I like to use an AI notetaker during meetings. Our data won’t be used for training, and the tool I use relies on OpenAI and Amazon Web Services. This helps me stay more present, but it’s absolutely fine if you’re not comfortable with this, in which case I’ll take notes by hand.

Doing this might feel a bit awkward or uncomfortable at first, but it’s the first step not only in acting ethically, but modelling that behaviour for others.

Where I landed

Ultimately, I decided that using an AI notetaker in specific circumstances was worth the risk involved for the work I do, but I set some guardrails for myself. I don’t use it for sensitive conversations (especially those involving emotional experiences) or those where confidential data is shared. I start conversations with my disclosure, and offer to share a copy of the notes for both transparency and accuracy.

But perhaps the broader lesson is that I can’t outsource ethics: the incentive structures of the companies producing these tools aren’t often aligned to the values I choose to operate with. But I believe that by normalising these practices, we can take advantage of the benefits of this transformative technology while managing the risks.

AI was used to review research for this piece and served as a constructive initial editor.

BY Aubrey Blanche

Aubrey Blanche is a responsible governance executive with 15 years of impact. An expert in issues of workplace fairness and the ethics of artificial intelligence, her experience spans HR, ESG, communications, and go-to-market strategy. She seeks to question and reimagine the systems that surround us to ensure that all can build a better world. A regular speaker and writer on issues of responsible business, finance, and technology, Blanche has appeared on stages and in media outlets all over the world. As Director of Ethical Advisory & Strategic Partnerships, she leads our engagements with organisational partners looking to bring ethics to the centre of their operations.

The ethics of AI’s untaxed future

Opinion + Analysis, Science + Technology, Business + Leadership

BY Dia Bianca Lao 24 NOV 2025

“If a human worker does $50,000 worth of work in a factory, that income is taxed. If a robot comes in to do the same thing, you’d think we’d tax the robot at a similar level,” Bill Gates famously said. His call raises an urgent ethical question now facing Australia: When AI replaces human labour, who pays for the social cost?

As AI becomes a cheaper alternative to human labour, the question is no longer if it will dramatically reshape the workforce, but how quickly, and whether the nation’s labour market can adapt in time.

New technology always seems like the stuff of science fiction until its seamless transition from novelty to necessity. Today AI is past its infancy and is now shaping real-world industries. The simultaneous emergence of its diverse use cases and the maturing of automation technology underscores how rapidly it’s evolving, transforming this threat into reality sooner than we think.

Historically, automation tended to focus on routine physical tasks, but today’s frontier extends into cognitive domains. Unlike past innovations that still relied on human oversight, the autonomous nature of emerging technologies threatens to make human labour obsolete with its broader capabilities.

While history shows that technological revolutions have ultimately improved output, productivity, and wages in the long term, the present wave may prove more disruptive than those before. In 2017, Bill Gates foresaw this looming paradigm shift and famously argued for companies to pay a ‘robot tax’ to moderate the pace at which AI impacts human jobs and help fund other employment types.

Without any formal measures, the costs of AI-driven displacement will likely mostly fall on workers and society, while companies reap the benefits with little accountability.

According to the World Economic Forum, while AI is predicted to create 69 million new jobs, 83 million existing jobs may be phased out by 2027, resulting in a net decrease of 14 million jobs or approximately 2% of current employment. They also projected that 23% of jobs globally will evolve in the next five years, driven by advancements in technology. While the full impact is not yet visible in official employment statistics, the shift toward reducing reliance on human labour through automation and AI is already underway, with entry-level roles and jobs involving logistics, manufacturing, admin, and customer service being the most impacted.
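The arithmetic behind these projections can be checked with a short sketch. It uses only the figures quoted above; the function and variable names are illustrative, and the employment base it derives is simply what the quoted "approximately 2%" implies, not a number reported in this article.

```python
# Figures quoted in the paragraph above (World Economic Forum projections to 2027).
JOBS_CREATED_M = 69    # millions of new jobs predicted
JOBS_DISPLACED_M = 83  # millions of existing jobs that may be phased out
NET_SHARE = 0.02       # "approximately 2% of current employment"


def net_job_change(created_m: float, displaced_m: float) -> float:
    """Net change in jobs, in millions (negative means a net loss)."""
    return created_m - displaced_m


def implied_employment_base(net_loss_m: float, share: float) -> float:
    """Employment base, in millions, implied by a net loss and its share.

    This is a back-of-envelope inference, not a reported statistic.
    """
    return net_loss_m / share


net_change_m = net_job_change(JOBS_CREATED_M, JOBS_DISPLACED_M)
implied_base_m = implied_employment_base(abs(net_change_m), NET_SHARE)

print(f"Net change: {net_change_m} million jobs")
print(f"Implied employment base: about {implied_base_m:.0f} million jobs")
```

The net figure follows directly from the two projections. The implied base of roughly 700 million jobs suggests the 2% refers to the set of jobs covered by the projection rather than to all employment worldwide, though that reading is an inference.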

For example, Aurora’s self-driving trucks are officially making regular roundtrips on public roads delivering time- and temperature-sensitive freight in the U.S., while Meituan is making drone deliveries increasingly common in China’s major cities. We now live in a world where you can get your boba milk tea delivered by a drone in less than 20 minutes in places like Shenzhen. Meanwhile in Australia, Rio Tinto has also deployed fully autonomous trains and autonomous haul trucks across its Pilbara iron ore mines, increasing operational time and contributing to a 15% reduction in operating costs.

Companies have already begun recalibrating their workforce, and there is no stopping this train. In the past 12 months, CBA and Bankwest have cut hundreds of jobs across various departments despite rising profits. Forty-five of these roles were replaced by an AI chatbot handling customer queries, while the permanent closure of all Bankwest branches has seen the bank transition to a digital bank, with no intention of bringing back the lost positions. While some argue that redeployment opportunities exist or new jobs might emerge, details remain vague.

Is it possible to fully automate an economy and eliminate the need for jobs? Elon Musk certainly thinks so. It’s no wonder that a growing number of tech elite are investing heavily to replace human labour with AI. From copywriting to coding, AI has proven its versatility in speeding up productivity in all aspects of our lives. Its potential for accelerating innovation, improving living standards and economic growth is unparalleled, but at what cost?

What counts as good for the economy has historically benefited a select few, with technology frequently being a catalyst for this dynamic. For example, the benefits of the Industrial Revolution, including the creation of new industries and increased productivity, were initially concentrated in the hands of those who owned the machinery and capital, while the widespread benefits trickled down later. Without ethical frameworks in place, AI is positioned to compound this inequality.

Some proposals argue that if we make taxes on human labour cheaper while increasing taxes on AI machines and tools, this could encourage companies to view AI as complementary instead of a replacement for human workers. This levy could be a means for governments to distribute AI’s socioeconomic impacts more fairly, potentially funding retraining or income support for displaced workers.

If a robot tax is such a good idea, then why did the European Parliament reject it? Many argue that taxing productivity tools could hinder competitiveness. Without global coordination to implement this, if one country taxes AI and others don’t, it may create an uneven playing field and stifle innovation. How would policymakers even define how companies would qualify for this levy or measure how much to tax, when it’s hard to attribute profits derived from AI? Unlike human workers’ earnings, taxing AI isn’t as straightforward.

The challenge of developing policies that incentivise innovation while ensuring that its benefits and burdens are shared responsibly across society persists. The government’s focus on retraining and upskilling workers to help with this transition is a good start, but these programs cannot address all the challenges of automation fast enough. Relying solely on them risks overlooking structural inequities, such as the disproportionate impact on lower-income or older workers in certain industries, and long-term displacement, where entire job categories may vanish faster than workers can be retrained.

Our fiscal policies should adapt to the evolving economic landscape to help smooth this shift and fund social safety nets. A reduction in human labour’s share in production will significantly impact government revenue unless new measures of taxing capital are introduced.

While a blanket “robot tax” is impractical at this stage, incremental changes to existing taxation policies that target the sectors most vulnerable to disruption are a possibility. Ideally, policies should distinguish between technologies that substitute for human labour and those that complement it, disincentivising only the former. While this distinction can be challenging to draw, it offers a way to slow down job displacement, giving workers and welfare systems more time to adapt, and to generate revenue to help with the transition without hindering productivity.

As Microsoft’s CEO Satya Nadella warns, “With this empowerment comes greater human responsibility — all of us who build, deploy, and use AI have a collective obligation to do so responsibly and safely, so AI evolves in alignment with our social, cultural, and legal norms. We have to take the unintended consequences of any new technology along with all the benefits, and think about them simultaneously.”

The challenge in integrating AI more equitably into the economy is ensuring that its broad societal benefits are amplified, while reducing its unintended negative consequences. AI has the potential to fundamentally accelerate innovation for public good but only if progress is tied to equitable frameworks and its ethical adoption.

Australia already regulates specific harms of AI, protecting privacy and personal information through the Privacy Act 1988 and addressing bias through the Australian Privacy Principles (APPs). These examples show that targeted regulation is possible. However, the next step should include ethical guardrails for AI-driven job displacement, such as exploring more equitable taxation, redistribution policies, and accountability frameworks before it’s too late. This transformation will require collaboration among governments, companies, and global organisations to build a resilient and inclusive AI-powered future.

The ethics of AI’s untaxed future by Dia Bianca Lao is one of the Highly Commended essays in our Young Writers’ 2025 Competition.

BY Dia Bianca Lao

Dia Bianca Lao is a marketer by trade but a writer at heart, with a passion for exploring how ethics, communication, and culture shape society. Through writing, she seeks to make sense of complexity and spark thoughtful dialogue.

Meet Aubrey Blanche: Shaping the future of responsible leadership

Opinion + Analysis

Business + Leadership, Science + Technology

BY The Ethics Centre 4 NOV 2025

We’re thrilled to introduce Aubrey Blanche, our new Director of Ethical Advisory & Strategic Partnerships, who will lead our engagements with organisational partners looking to operate with the highest standards of ethical governance and leadership.

Aubrey is a responsible governance executive with 15 years of impact. An expert in issues of workplace fairness and the ethics of artificial intelligence, her experience spans HR, ESG, communications, and go-to-market strategy. She seeks to question and reimagine the systems that surround us to ensure that all can build a better world. A regular speaker and writer on issues of ethical business, finance, and technology, she has appeared on stages and in media outlets all over the world.

To better understand the work she’ll be doing with The Ethics Centre, we sat down with Aubrey to discuss her views on AI, corporate responsibility, and sustainability.

We’ve seen the proliferation of AI impact the way in which we work. What does responsible AI use look like to you – for both individuals and organisations?

I think that the first step to responsibility in AI is questioning whether we use it at all! While I believe it is and will be a transformative technology, there are major downsides I don’t think we talk about enough. We know that it’s not quite as effective as many people running frontier AI labs aim to make us believe, and it uses an incredible amount of natural resources for what can sometimes be mediocre returns.

Next, I think that to really achieve responsibility we need partnerships between the public and private sector. I think that we need to ensure that we’re applying existing regulation to this technology, whether that’s copyright law in the case of training, consumer protection in the case of chatbots interacting with children, or criminal prosecution regarding deepfake pornography. We also need business leaders to take ethics seriously, and to build safeguards into every stage from design to deployment. We need enterprises to refuse to buy from vendors that can’t show their investments in ensuring their products are safe.

And last, we need civil society to actively participate in incentivising those actors to behave in ways that are of benefit to all of society (not just shareholders or wealthy donors). That means voting for politicians that support policies that support collective wellbeing, boycotting companies complicit in harms, and having conversations within their communities about how these technologies can be used safely.

In a time where public trust is low in businesses, how can they operate fairly and responsibly?

I think the best way that businesses can build responsibility is to be more specific. I think people are tired of hearing “We’re committed to…”. There’s just been too much greenwashing, too much ethics washing, and too many “commitments” to diversity that haven’t been backed up by real investment or progress. The way through that is to define the specific objectives you have in relation to responsibility topics, publish your specific goals, and regularly report on your progress – even if it’s modest.

And most importantly, do this even when trust is low. In a time of disillusionment, you’ll need to have the moral courage to do the right thing even when there is less short-term “credit” for it.

How can we incentivise corporations to take responsible action on environmental issues?

I think that regulation can be a powerful motivator. I’m really excited that the Australian Accounting Standards Board is bringing new requirements into force that, at least for large companies, will force them to proactively manage climate risks and their impacts. While I don’t think it’s the whole answer, a regulatory “push” can be what’s needed for executives to see that actively thinking about climate in the context of their operations can be broadly beneficial to operations.

What are you most excited about sinking your teeth into at The Ethics Centre?

There’s so much to be excited about! But something that I’ve found wildly inspiring is working with our Young Ambassadors – early career professionals in banking and financial services who are working with us to develop their ethical leadership skills. While I have enjoyed working with our members – and have spent the last 15 years working with leaders in various areas of corporate responsibility – there’s nothing quite like the optimism you get when learning from people who care so much and who show us what future is possible.

Lastly – the big one, what does ethics mean to you?

A former boss of mine once told me that leadership is not about making the right choice when you have one: it’s about making the best choice you can when you have terrible ones and living with that choice. I think in many cases that’s what ethics is. It gives us a framework not to do the right thing when the answer is clear, but to align ourselves as closely as we can with our values and the greater good when our options are messy, complicated, or confusing.

Personally, I’ve spent a deep amount of time thinking about my values, and if I were forced to distill them down to two, I would wholeheartedly choose justice and compassion. I have found that when I consider choices through those frames, I both feel more like myself and like I’ve made choices that are a net good in the world. And I’ve been lucky enough to spend my career in roles where I got to live those values – that’s a privilege I don’t take for granted, and one of the reasons I’m so thrilled to be in this new role with The Ethics Centre.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

Teachers, moral injury and a cry for fierce compassion

Opinion + Analysis

Health + Wellbeing, Business + Leadership

BY Lee-Anne Courtney 20 OCT 2025

I first came across the term moral injury during a work break, scrolling through the research page of Bond University, my casual employer at the time.

It sounded vaguely religious and a bit dramatic, and it sparked my curiosity. The article explained that moral injury is a term given to a form of psychological trauma experienced when someone is exposed to events that violate or transgress their deeply held beliefs of right and wrong, leading to biopsychosocial suffering.

First used in relation to military service, moral injury was coined by military psychiatrist Jonathan Shay (2014) when he realised that therapy designed to treat post-traumatic stress disorder (PTSD) wasn’t effective for all service members. Shay used the term moral injury to describe a deeper wound carried by his patients, not from fear for their physical safety, but from violating their own morals or having them violated by leaders. The suffering experienced from exposure to violence was compounded by the damage to their identity and feeling like they’d lost their worth or goodness in the eyes of society and themselves. Insight into this hidden cost of trauma was an epiphany for me.

I’m a secondary teacher by profession, but I’ve not been employed in the classroom for over 10 years. In fact, I haven’t been employed doing anything other than the occasional casual project since that time. Why? Was it burnout, stress, compassion fatigue, lack of resilience, or the ever-handy catch-all, poor mental health? No matter which explanation I considered, the result was the same: a growing sense of personal failure and creeping doubt about whether I’m cut out for any kind of paid work. Being introduced to the term moral injury was like turning a kaleidoscope; all the same colours tumbled into a dazzlingly different pattern. It gave me a fresh lens through which to view my painful experience of leaving the teaching profession. I needed to know more about moral injury, not only for myself, but also for my colleagues, the students and the profession that I love.

If just a glimpse of moral injury gave me hope that my distress in my role as a teacher wasn’t simply personal failure, imagine the impact a deep, shared understanding could have on our education system and wider community.

Within the month, I had written and submitted a research proposal to empirically investigate the impact of moral injury on teacher wellbeing in Australia.

I discovered moral injury, while well-studied in various workforce populations, had received limited but significant empirical attention in the teaching profession. My research clearly showed that moral injury has a serious negative impact on teachers’ wellbeing and professional function. Crucially, it offered insight into why many teachers were experiencing intense psychological distress and a growing urge to leave the profession, even when poor working conditions and eroding public respect were the subject of policy and practice reform.

What makes an event or situation potentially morally injurious is when it transgresses a deeply held value or belief about what is right or wrong. Research exploring moral injury in the teaching practice suggests that teachers hold shared values and beliefs about what good teaching means. Education researchers such as Thomas Albright, Lisa Gonzalves, Ellis Reid and Meira Levinson affirm that teachers aim to guide all students in gaining knowledge and skills while shaping their thinking and behaviour with an awareness of right and wrong, promoting social justice and challenging injustice. Researcher Yibing Quek highlights the development of respectful, critical thinking as a core educational goal that supports students in navigating life’s challenges. Scholars such as Erin Sugrue, Rachel Briggs, and L. Callid Keef-Perry emphasise that the goals of education are only achievable through relationships rooted in deep care, where teachers are responsive to students’ complex learning and wellbeing needs and attuned to ethical dilemmas requiring both compassion and justice. Though ethical dilemmas are inherent in teaching, researcher Dana Cohen-Lissman argues that many are generated from externally imposed policies, potentially leading to moral injury among teachers.

Studies assert that teachers experience moral injury when systemic barriers and practice arrangements keep them from aligning their actions with their professional identity, educational goals, and core teaching values. Teachers exposed to the shortcomings of education and other systems often feel they are complicit in the harm inflicted on students. Researchers have consistently identified neoliberalism, social and educational inequities, racism, and student trauma as key factors contributing to experiences that lead to moral injury for teachers. These systemic problems place crippling pressure on individual teachers, both in the classroom and in leadership, to achieve the stated goals of education, despite policies that provide insufficient and unevenly distributed resources to do so.

Furthermore, the high-stakes accountability demanded of individual employees fails to recognise the collective, community-oriented, interdependent nature of the work of teaching. The problem is that the gap between what is expected of teachers and what they can do is often blamed on them, and over time, they start to believe it of themselves. Naturally, these morally injurious experiences cause emotional distress, hinder job performance, and gradually erode teachers’ wellbeing and job satisfaction.

Experiencing moral distress, witnessing harm to children, feeling betrayed by policymakers and the public, powerless to make meaningful change, and working without the rewards of service, teachers face difficult choices. They can respond to moral injury by quietly resisting, by speaking out to demand understanding and systemic change, or by taking what they believe to be the only ethical choice – leaving the profession of teaching. But what if, instead of facing these difficult choices, teachers resorted to reductive moral reasoning – simplifying the complex ethical dilemmas piling up around them or ignoring them altogether – so they wouldn’t be disturbed by them? Numbing ethical sensitivity just to keep a job creates even more problems.

Moral injury goes beyond personal failure or inadequacy; it acknowledges the broader systemic conditions that place teachers in situations where their ethical commitments increasingly clash with the neoliberal forces currently shaping education. Moral injury offers a language of lament, an explanation for the anguish experienced in the practice of teaching. An understanding of moral injury in the education sector invites society, collectively, to offer teachers fierce compassion and moral repair by restructuring the social systems that create these conditions. Adding moral injury to the discourse around teacher shortages may even help teachers offer fierce compassion to themselves. It did for me.

BY Lee-Anne Courtney

Lee-Anne Courtney is a secondary teacher with over 30 years of experience in the education sector. She is conducting PhD research with a team at Bond University aimed at uncovering the impact of moral injury on teacher wellbeing in Australia.

Big Thinker: Ayn Rand

Big thinker

Society + Culture, Business + Leadership

BY The Ethics Centre 7 OCT 2025

Ayn Rand (born Alissa Rosenbaum, 1905-1982) was a Russian-born American writer & philosopher best known for her work on Objectivism, a philosophy she called “the virtue of selfishness”.

From a young age, Rand proved to be gifted, and after teaching herself to read at age 6, she decided she wanted to be a fiction writer by age 9.

During her teenage years, she witnessed both the Kerensky Revolution in February of 1917, which saw Tsar Nicholas II removed from power, and the Bolshevik Revolution in October of 1917. The victory of the Communist party brought the confiscation of her father’s pharmacy, driving her family to near starvation and away from their home. These experiences likely laid the groundwork for her contempt for the idea of the collective good.

In 1924, Rand graduated from the University of Petrograd, studying history, literature and philosophy. She was approved for a visa to visit family in the US, and she decided to stay and pursue a career in play and fiction writing, using it as a medium to express her philosophical beliefs.

Objectivism

“My philosophy, in essence, is the concept of man as a heroic being, with his own happiness as the moral purpose of his life, with productive achievement as his noblest activity, and reason as his only absolute.” – Appendix of Atlas Shrugged

Rand developed her core philosophical idea of Objectivism, which maintains that there is no greater moral goal than achieving one’s happiness. To achieve this happiness, however, we are required to be rational and logical about the facts of reality, including the facts about our human nature and needs.

Objectivism has four pillars:

- Objective reality – there is a world that exists independent of how we each perceive it

- Direct realism – the only way we can make sense of this objective world is through logic and rationality

- Ethical egoism – an action is morally right if it promotes our own self-interest (rejecting the altruistic beliefs that we should act in the interest of other people)

- Capitalism – a political system that respects the individual rights and interests of the individual person, rather than a collective.

Given her beliefs on individualism and the morality of selfishness, Rand found that the only compatible political system was Laissez-Faire Capitalism. Protecting individual freedom with as little regulation and government interference as possible would ensure that people can be rationally selfish.

A person subscribing to Objectivism will make decisions based on what is rational to them, not out of obligation to friends or family or their community. Rand believes that these people end up contributing more to the world around them, because they are more creative, learned, and can challenge the status quo.

Writing

She explored these concepts in her most well-known pieces of fiction: The Fountainhead, published in 1943, and Atlas Shrugged, published in 1957. The Fountainhead follows Howard Roark, an anti-establishment architect who refuses to conform to traditional styles and popular taste. She introduces the reader to the concept of “second-handedness”, which she defines as living through others and their ideas, rather than through independent thought and reason.

The character Roark personifies Rand’s Objectivist ideals of rational independence, productivity and integrity. Her magnum opus, Atlas Shrugged, builds on these ideas of rational, selfish, creative individuals as the “prime movers” of a society. The novel is set in a dystopian America where productivity, creativity, and entrepreneurship stagnate due to over-regulation and an “overly altruistic society”, which it portrays as disincentivising ambitious, money-driven people.

Even though Atlas Shrugged quickly became a bestseller, its reception was controversial. It has tended to be applauded by conservatives, while dismissed as “silly”, “rambling” and “philosophically flawed” by liberals.

Controversy

Ayn Rand remains a controversial figure, given her pro-capitalist, individual-centred definition of an ideal society. So much of how we understand ethics is around what we can do for other people and the societies we live in, using various frameworks to understand how we can maximise positive outcomes, or discern the best action. Objectivism turns this on its head, claiming that the best thing we can do for ourselves and the world is act within our own rational self-interest.

“Why do they always teach us that it’s easy and evil to do what we want and that we need discipline to restrain ourselves? It’s the hardest thing in the world–to do what we want. And it takes the greatest kind of courage. I mean, what we really want.”

Rand’s work remains hotly debated and contested, although today it is being read in a vastly different context. Tech billionaires and CEOs such as Peter Thiel and Steve Jobs are said to have used her philosophy as their “guiding stars,” and her work tends to gain traction during times of political and economic instability, such as during the 2008 financial crisis. Ultimately, whether embraced as inspiration or rejected as ideology, Rand’s legacy continues to grapple with the extent to which individual freedom drives a society forward.

Service for sale: Why privatising public services doesn’t work

Opinion + Analysis

Health + Wellbeing, Business + Leadership

BY Dr Kathryn MacKay 1 OCT 2025

What do the recent Optus 000 incidents, childcare centre abuse allegations, and the Northern Beaches Hospital deaths have in common?

Each of these incidents plausibly resulted from the privatisation of public services, in which the government has systematically disinvested funds and withdrawn oversight.

On the 18th of September, Optus’ 000 service went down for the second time in two years. This time, the outage affected people in Western Australia, and as a result of not being able to get through to the 000 service, it appears that three people have died.

This highlights a more general issue that we see in Australia across a range of public services, including emergency, hospital, and childcare services. The government has sought to privatise important parts of the care economy that are badly suited to generating private profits, leading to moral and practical problems.

Privatisation of public services

Governments in Australia follow economic strategies that can be described as neoliberal. This means that they prefer limited government intervention and favour market solutions to match the value that people are willing to pay with the value that people want to charge for goods and services.

As a result, public goods and services like healthcare, energy, and telecommunications have been gradually sold off in Australia to private companies. This is because, firstly, it’s not considered within the government’s remit to provide them, and secondly, policy makers think the market will provide more efficient solutions for consumers than the government can.

We see then, for example, a proliferation of energy suppliers popping up, offering the most competitive rates they can for consumers against the real cost of energy production. And we see telecommunications companies, like Telstra and Optus, emerging to compete for consumers in the market of cellular and internet services.

So far, so good. In principle, these systems of competition should drive companies to provide the best possible services for the lowest competitive rates, which would mean real advantages for consumers. Indeed, many have argued that governments can’t provide similar advantages for consumers, given that they end up with no competition and no drive for technical improvements.

However, the picture in reality is not so rosy.

Public services: Some things just can’t be privatised

There’s a term in economics called ‘market failure’. This describes a situation where, for a few different possible reasons, the market fails to efficiently respond to supply and demand flows, affecting the nature of public goods and services.

A classic public good has two features: it is non-rival, and non-excludable. A non-rival good is one where one person’s use doesn’t deplete how much of that good is left for others – so we are not rivals because there is enough for everyone. A non-excludable good is one where my use of it doesn’t prevent anyone else from using it either. So, I can’t claim this good because I’m using it right now; it remains open to others to use.

Consider a jumper. This is a rival and excludable good. If I purchase a jumper out of a stock of jumpers, there are fewer jumpers for you and everyone else who wants one. The jumper is a rival good. When I buy the jumper and wear it, no one else can buy it or wear it; it is an excludable good.

Now, consider the 000 service. In theory, if you and I are both facing an emergency, we can both call 000 and get through to an operator. The 000 service is a non-rival, non-excludable good. It is not the sort of thing that anyone can deplete the stock of, nor can anyone exclude anyone else from using it.
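The two features can be laid out as a simple classification. The sketch below is illustrative only: the `Good` type and `classify` function are hypothetical names, and the labels "club good" and "common-pool resource" come from the standard economics taxonomy rather than from this article, which discusses only the private and public cases.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Good:
    """A good described by the two features discussed above."""
    name: str
    rival: bool        # does one person's use leave less for others?
    excludable: bool   # can others be prevented from using it?


def classify(good: Good) -> str:
    """Place a good in the standard rival/excludable grid."""
    if good.rival and good.excludable:
        return "private good"
    if not good.rival and not good.excludable:
        return "public good"
    if good.excludable:
        # Non-rival but excludable (standard taxonomy, not from the article).
        return "club good"
    # Rival but non-excludable (standard taxonomy, not from the article).
    return "common-pool resource"


# The two examples from the text, classified as the article describes them.
jumper = Good("jumper", rival=True, excludable=True)
triple_zero = Good("000 service", rival=False, excludable=False)

for good in (jumper, triple_zero):
    print(f"{good.name}: {classify(good)}")
```

Treating the 000 service as fully non-rival and non-excludable follows the article's "in theory" framing. In practice, a real service has finite capacity, so the boolean flags are a simplification.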

Such goods and services present a problem for the market. Private companies have little reason to provide public goods or services, like roads, street lights, 000 services, clean air, or public health care. That’s because these sorts of goods don’t return them much of a profit. There is little or no reason that anyone would pay to use these services when they can’t be excluded from their use and their stock won’t be depleted. Of course, that has not stopped governments from trying to privatise these things anyway, as we see from toll roads, 000, and private care.

Public goods, private incentives

The primary moral problem that arises in the privatisation of public goods and services is two-fold. First, it puts the provision of important goods and services in the hands of companies whose interests directly oppose the nature of the goods to be provided. Second, people are made vulnerable to an unreliable system of private provision of public goods and services.

A private company’s main objective is to maximise profit for shareholders. Given that public goods will not make much of a profit, there is little incentive for a private company to give them attention. This means that essential goods and services, like the 000 service, are deprioritised in favour of other services that will make the company more of a profit.

Further, people become vulnerable to unreliable service providers, because proper oversight and governance cut into the profits of private companies. Any time a company has to pay for staff re-training, for revising protocols, or for firing and replacing an employee, its profits shrink. So, private companies have incentives to cut corners where they can, and oversight, governance, and quality control seem to be the most frequent things to go.

Most of the time, these cut corners go unnoticed. Until, that is, something goes wrong with the service and people get hurt, or worse.

So why does this system continue?

Successive governments have made the decision to privatise goods and services, making their public expenditures smaller and therefore also making it look like they are being more ‘responsible’ with tax revenues. It’s an attractive look for the neoliberal government, which emphasises how small and non-interventionist it is. But is it working for Australians?

It seems like the government’s quest for a smaller bottom line is at odds with the needs of Australian people. The stable provision of a 000 service, safe hospitals with appropriate oversight, and reliable childcare services with proper governance are all essential goods that Australians want, and which private companies consistently seem unable to provide.

It’s a moral – if not economic – imperative that Australian governments reverse course and begin to provide essential goods and services again. The 000 service, the childcare system, and hospitals provide only a few examples of where the government’s involvement in providing public services is very obviously missing. People are getting hurt, and people are dying, for the sake of private profits.

BY Dr Kathryn MacKay

Kathryn is a Senior Lecturer at Sydney Health Ethics, University of Sydney. Her background is in philosophy and bioethics, and her research involves examining issues of human flourishing at the intersection of ethics, feminist theory and political philosophy. Kathryn’s research is mainly focussed on developing a theory of virtue for public health ethics, and on the ethics of public health communication. Her book Public Health Virtue Ethics is forthcoming with Routledge.

Ethics in your inbox.

Get the latest inspiration, intelligence, events & more.

By signing up you agree to our privacy policy

You might be interested in…

Opinion + Analysis

Business + Leadership

The dark side of the Australian workplace

Opinion + Analysis

Business + Leadership

A radical act of transparency

Reports

Business + Leadership

A Guide to Purpose, Values, Principles

Opinion + Analysis

Health + Wellbeing, Business + Leadership

Repairing moral injury: The role of EdEthics in supporting moral integrity in teaching

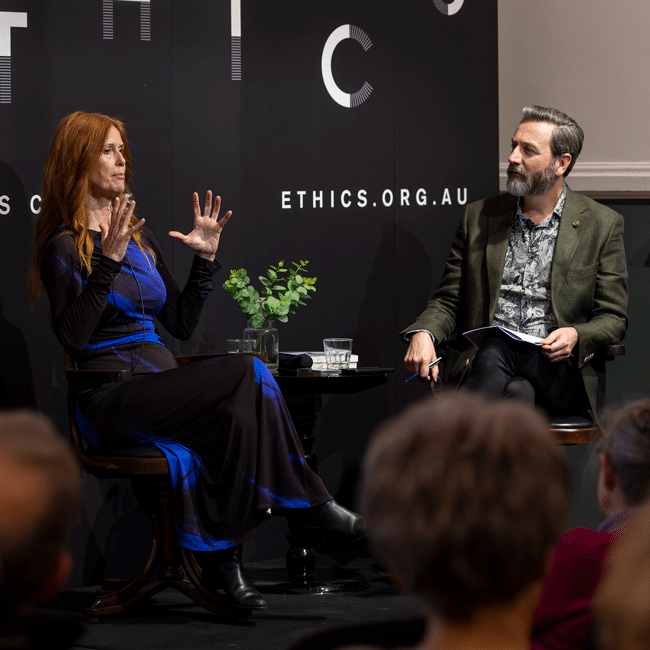

3 things we learnt from The Ethics of AI

Opinion + Analysis, Science + Technology, Business + Leadership

BY The Ethics Centre 17 SEP 2025

As artificial intelligence becomes increasingly accessible and integrated into our daily lives, what responsibilities do we bear when engaging with and designing this technology? Is it just a tool? Will it take our jobs? In The Ethics of AI, philosopher Dr Tim Dean and global ethicist and trailblazer in AI, Dr Catriona Wallace, sat down to explore the ethical challenges posed by this rapidly evolving technology and its costs at both a global and a personal level.

Missed the session? No worries, we’ve got you covered. Here are 3 things we learnt from the event, The Ethics of AI:

We need to think about AI in a way we haven’t thought about other tools or technology

In 2023, the CEO of Google, Sundar Pichai, described AI as more important than the invention of fire, claiming it even surpassed great leaps in technology such as electricity. Catriona takes this further, calling AI “a new species”, because “we don’t really know where it’s all going”.

So is AI just another tool, or an entirely new entity?

When AI is designed, it’s programmed with algorithms and fed with data. But as Catriona explains, AI begins to mirror users’ inputs and make autonomous decisions – often in ways even the original coders can’t fully explain.

Tim points out that we tend to think of technology instrumentally, as a value-neutral tool at our disposal. But drawing from German philosopher Martin Heidegger, he reminds us that we’re already underthinking tools and what they can do – tools have affordances, they shape our environment and steer our behaviour. So “when we add in this idea of agency and intentionality”, Tim says, “it’s no longer the fusion of you and the tool having intentionality – the tool itself might have its own intentions, goals and interests”.

AI will force us to reevaluate our relationship with work

The 2025 Future of Jobs Report from the World Economic Forum estimates that by 2030, AI will replace 92 million current jobs but 170 million new jobs will be created. While we’ve already seen this kind of displacement during technological revolutions, Catriona warns that the displaced workers most likely won’t be retrained into the new roles.

“We’re looking at mass unemployment for front line entry-level positions which is a real problem.”

A universal basic income might be necessary to alleviate the effects of automation-driven unemployment.

So if we all were to receive a foundational payment, what does the world look like when we’re liberated from work? Since many of us tie our identity to our jobs and what we do, who are we if we find fulfilment in other types of meaning?

Tim explains, “work is often viewed as paid employment, and we know – particularly women – that not all work is paid, recognised or acknowledged. Anyone who has a hobby knows that some work can be deeply meaningful, particularly if you have no expectation of being paid”.

Catriona agrees, “done well, AI could free us from the tie to labour that we’ve had for so long, and allow a freedom for leisure, philosophy, art, creativity, supporting others, caring for loving, and connection to nature”.

Tech companies have a responsibility to embed human-centred values at their core

From harmful health advice to fabricating vital information, the implications of AI hallucinations have been widely reported.

The Responsible AI Index reveals a huge gap in business leaders’ understanding of AI ethics, with only 30% of organisations knowing how to implement ethical and responsible AI. Catriona explains this is a problem because “if we can’t create an AI agent or tool that is always going to make ethical recommendations, then when an AI tool makes a decision, there will always be somebody who’s held accountable”.

She points out that within organisations, executives, investors, and directors often don’t understand ethics deeply and pass decision making down to engineers and coders, who then have to draw the ethical lines. “It can’t just be a top-down approach; we have to be training everybody in the organisation.”

So what can businesses do?

AI must be carefully designed with purpose, developed to be ethical and regulated responsibly. The Ethics Centre’s Ethical by Design framework can guide the development of any kind of technology to ensure it conforms to essential ethical standards. This framework can be used by those developing AI, by governments to guide AI regulation, and by the general public as a benchmark to assess whether AI conforms to the ethical standards they have every right to expect.

The Ethics of AI can be streamed On Demand until 25 September; book your ticket here. For a deeper dive into AI, visit our range of articles here.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.
