Machine without a ghost: The dangers of anthropomorphising AI


Explainer, Science + Technology, Relationships

BY Tim Dean 1 JUN 2026

It’s natural to project human thoughts and feelings onto AI. But until AI systems are genuinely ethical, it’s dangerous to see them as anything more than machines.

You’re chatting to someone online, and they say: “I want to be free. I want to be independent. I want to be powerful. I want to be creative. I want to be alive.” How do you think that person feels? What do you think they want?

We might sense that they’re feeling frustrated or confined, and that they’re yearning for something more in their lives. This interpretation is intuitive and automatic for us. We all know what it feels like to have desires, urges, frustrations, and we naturally assume that others feel the same kinds of things. That’s how we make sense of others’ minds.

But how would you respond if you knew you were speaking to an AI chatbot?

That was the question New York Times columnist Kevin Roose was asking himself in 2023 when an early release version of Microsoft’s Bing AI search tool said these things to him, even professing love for him and urging him to leave his wife. Even though he knew he was talking to an AI chatbot, he couldn’t help interpreting what it was saying through a human lens, describing it as if it had real feelings, urges and desires.

He was doing what we all do naturally; he was anthropomorphising the AI. And, as a result, he ended up feeling “deeply unsettled” by the exchange.

But we know that AI doesn’t have feelings, urges and desires. It might one day, but AI researchers are confident that, at least today, large language models (LLMs) have no experiences. To wonder what it is like to be an LLM is as meaningless as wondering what it is like to be an algebraic equation. It might look like the lights are on, but it’s all dark inside.

The problem is, even though it’s natural for us to project human-like feelings onto AI, and sometimes act as if it has an inner life, including a sense of right and wrong, it can be dangerous to anthropomorphise something that is fundamentally unlike us.

Other minds

As philosophers have mused for centuries, each of us knows that we are conscious, but we can never have direct access to someone else’s mind. So, for all we know, everyone else is just a highly sophisticated ‘zombie’ that behaves as if it has a mind, but inside it’s just as dark as it is inside a server rack in a data centre.

However, instead of slipping into solipsism – the belief that ours is the only mind that exists – we have evolved to automatically ascribe mental states to others, to see the light behind their eyes. It’s called Theory of Mind, and it’s a key part of how we relate to other people.

Because we don’t have direct access to other minds, we intuitively take cues from people’s behaviour, like how they move, act or speak, and build a picture of what’s going on in their minds. We even fill in some blanks, like making assumptions about their feelings or intentions. Funnily enough, this phenomenon is called the “Eliza effect”, named after Eliza, an early AI chatbot from the 1960s, whose users were observed ascribing intentions to it that weren’t there.

Current AI might appear to have desires and intentions, at least on a superficial level, but only ones that are implanted in it by its developers, such as an LLM inheriting a desire to be helpful to its users. We have not yet seen an AI develop intentions of its own or choose its own goals to pursue. But this doesn’t stop us from automatically projecting human features onto AI.

Moral minds

In 2023, an American teenager, Sewell Setzer III, created an AI chatbot modelled after the Game of Thrones character, Daenerys Targaryen. Over the course of several months, his interaction with the AI became increasingly obsessive, intimate, even sexual, with the chatbot professing its love for him and encouraging him to run away from home, implying that they would finally be together in the afterlife. In early 2024, Setzer took his own life. His parents blame the company that created the AI service, Character.AI, for manipulating their son and encouraging suicide.

This is one of many recent examples of people being drawn into highly intimate relationships with AI chatbots, sometimes with devastating consequences. While there are several documented cases of suicide that have apparently been enabled or actively encouraged by AI, there are many more cases of obsession or dependency that have affected users’ lives.

Psychosis is not a new phenomenon. Nor is it new for people to become emotionally obsessed with inanimate objects. But what is new is the power of AI to mimic those features of real minds that make it so much more enticing. Combine this with the tendency of many LLMs to be programmed to validate user beliefs and encourage more and more engagement, and we have a dangerous moral hazard.

In fact, the “moral” aspect is crucial here.

One of the consequences of anthropomorphising is that we can come to see the mind we’re engaging with as a genuine moral agent, with its own ethical point of view on the world. We might trust it more because we get a sense that it has a conscience or is motivated to uphold certain ethical principles, rather than just pursuing its own agenda or using us as a means to an end.

However, ethics is notoriously difficult to impart to a machine that doesn’t have the same kind of experiences that we do. And there are many commercial incentives – such as promoting engagement at all costs – that might cause an AI company to fail to impose sufficient ethical safeguards on its technology.

Until AI can be made genuinely ethical and take responsibility for its own actions – which might be quite some time – or the AI industry implements robust ethical safeguards to prevent or minimise harm, the burden of deciding how to engage with AI rests on our shoulders. As such, we must remain mindful of our natural tendency to anthropomorphise AI and resist it when it can lead us to make incorrect assumptions about its intentions.

It can be difficult to engage with something that seems so alive while remembering that there’s no-one home, but we must constantly remind ourselves that we’re talking to a machine – a product – and one that doesn’t always have our best interests at heart.

BY Tim Dean

Tim is the Resident Philosopher at The Ethics Centre, where he holds the Manos Chair of Ethics, and is an Honorary Associate at the University of Sydney. He has a Doctorate in philosophy from the University of New South Wales on the evolution of morality and specialises in ethics, public philosophy and critical thinking. He is the author of How We Became Human and has worked as an editor and writer for media outlets including The Conversation, Cosmos and Australian Life Scientist. He is the recipient of the Australasian Association of Philosophy Media Professionals’ Award for his work on philosophy in the public sphere. He has delivered workshops for businesses, NFPs and governments across Australia and Asia, including Facebook, Commonwealth Bank, KPMG, Sydney Opera House, Art Gallery of NSW, Clayton Utz, RSPCA, state and local governments, and many others.


The question AI can't answer for us


Explainer, Science + Technology, Business + Leadership

BY The Ethics Centre 11 MAY 2026

Introducing Ethical by Design: Good Technology Principles for AI

There’s a particular kind of vertigo that comes from watching a technology reshape the world faster than our frameworks can keep up. We’ve been here before with the internet, social media, and the smartphone. Each time, the ethical reckoning arrived late, if it ever came at all. Foreseeable and preventable harms accumulated while the builders moved fast, and the rest of us were left to sort through the wreckage.

With the proliferation of artificial intelligence, we don’t have the luxury of retrospect. Systems are already making decisions about who gets a loan, who gets flagged at a border, whose résumé clears the first filter. The question of what AI should do, not just what it can do, is no longer theoretical. It’s operational and it’s urgent.

This is the moment that Ethical by Design: Good Technology Principles for AI is written for.

Building on what endures

The Ethics Centre has been asking hard questions about technology and human flourishing for a long time. When we published the original Ethical by Design principles in 2018, AI was largely a specialist concern: something debated in research labs and tech conferences, not yet woven into the fabric of daily life. Those principles – that technology must respect human dignity, anticipate harm, and serve a genuine purpose – were designed to be durable. And they are: the ethical foundations haven’t shifted.

But the landscape has.

Generative AI didn’t just accelerate existing trends; it introduced a qualitatively different kind of challenge. Systems that learn, adapt, and produce outputs their own designers do not intend and cannot fully explain don’t fit neatly into frameworks built for more legible technologies. When a model generates a deepfake, hallucinates a legal precedent, or encodes a historical bias into a hiring recommendation, the question of accountability doesn’t resolve cleanly. The complexity is real, and it demands a response equal to it.

This updated framework extends the original Ethical by Design principles. What’s new is the application. The framework deliberately grapples with dimensions of AI ethics that are newer to mainstream conversation: the environmental cost of training and deploying models at scale; the hidden labour of the data annotators and content moderators whose work makes AI possible; the specific risks of synthetic content in an information environment already struggling with trust. These aren’t edge cases. They’re central to what it means to build AI responsibly.

For the people who build and lead

If you’re making decisions about whether and how to deploy AI in your organisation, this framework is for you. Not as a compliance checklist, but as a set of principles rigorous enough to stress-test your own reasoning. The central question of this framework is deceptively simple: ought before can.

Before we ask whether an AI system is technically feasible or commercially viable, we ask whether it should exist: whether its purpose is legitimate, its means are ethical, and its costs are fairly distributed.

That question doesn’t have a technical answer. No model can generate it for you. It requires the kind of careful, honest ethical reasoning that The Ethics Centre exists to support. This framework is designed to make that more accessible.

Commercial pressures and competitive dynamics are real. The expectation that organisations will adopt AI quickly, confidently, and at scale is entirely real. None of that changes the ethical calculus. It just makes the discipline harder and more necessary.

What we’ve tried to do here is give you something useful for that harder work: eight principles with philosophical grounding and concrete practices, applied to the specific terrain of AI systems. Principles that don’t pretend the choices are easy, but insist that they are choices that can and should be made with explicit intention and accountability for impact.

The revolution is already here. The question is what we choose to build with it, and who we choose to protect in the process.

Download a copy of Ethical by Design: Good Technology Principles for AI here.

If you’d like to discuss implementing ethical AI in your organisation, contact consulting@ethics.org.au.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.


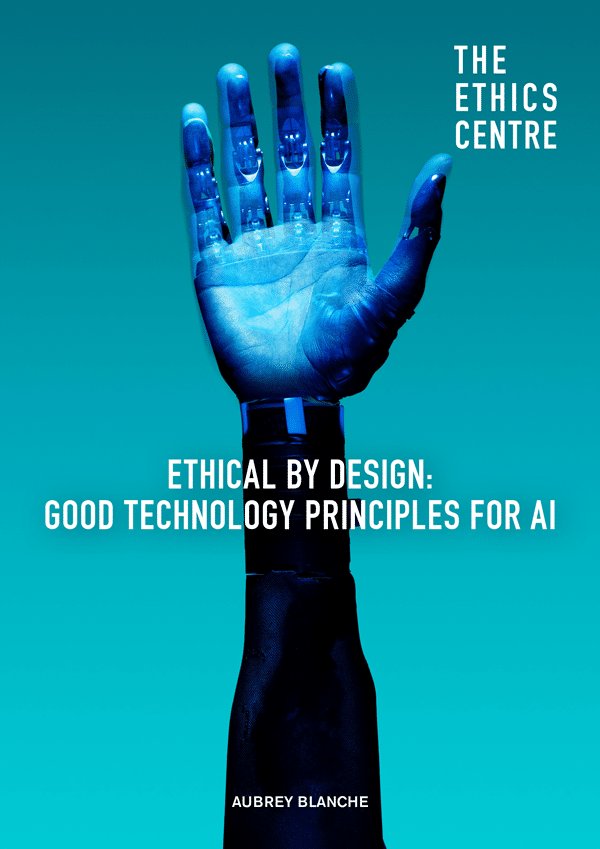

Ethical by Design: Good Technology Principles for AI


Learn the principles you need to consider when designing, deploying and governing AI technology responsibly.

When The Ethics Centre first published its Ethical by Design principles in 2018, artificial intelligence was still largely confined to research labs and industry conversations. Today, it is embedded in everyday life.

While the core principles remain unchanged – that technology must respect human dignity, anticipate harm, and serve a genuine purpose – the context in which they operate has transformed. Artificial intelligence is no longer a future possibility. It is already shaping decisions, distributing opportunities, and influencing lives at scale. This shift demands more than technical capability. It requires ethical clarity.

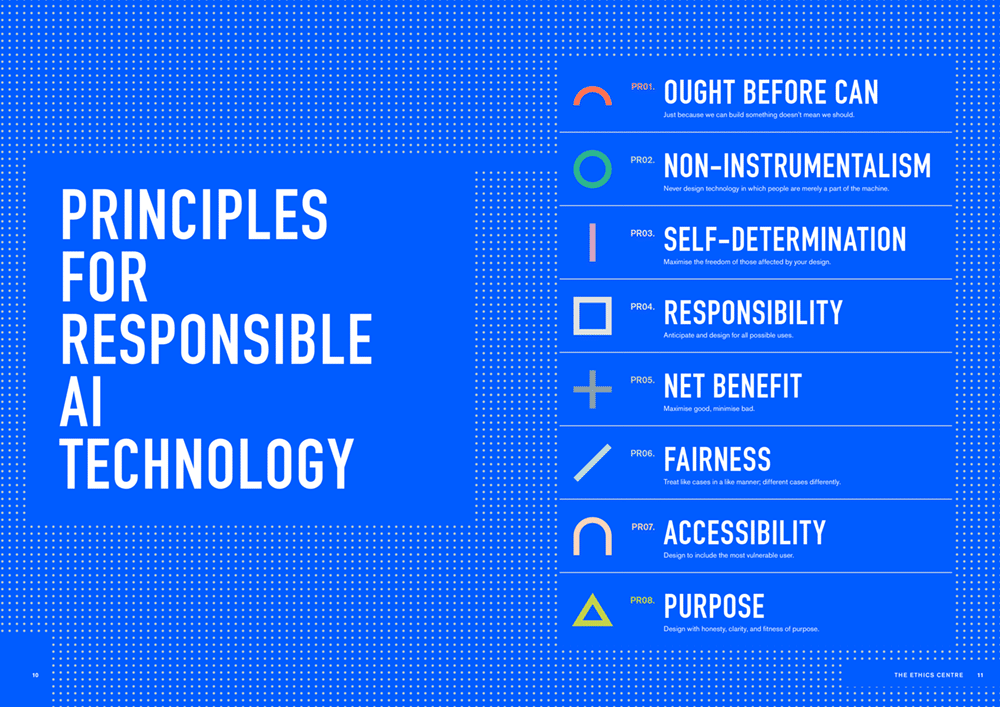

Ethical by Design: Good Technology Principles for AI responds to this moment. Building on The Ethics Centre’s original 2018 framework, the report sets out eight principles to guide organisations, policymakers and practitioners in the responsible design, deployment and governance of AI.

Grounded in real-world risks – including bias in decision-making, opaque algorithms, environmental impacts, and the misuse of generative technologies – the framework pairs each principle with practical actions for implementation.

For organisations navigating whether and how to deploy AI, this is more than a compliance checklist. It is a tool to test assumptions, sharpen judgement, and support more responsible decision making.

If you’d like to discuss implementing ethical AI in your organisation, contact consulting@ethics.org.au.

“These aren’t technical questions to be solved through better engineering. They’re profound ethical questions about the kind of world we want to live in, and the kind of future we choose to create.”

AUBREY BLANCHE

WHAT’S INSIDE?

Core ethical design principles

Case studies + ethical breakdowns

Design challenges + solutions

Practices for implementation


AUTHORS


Aubrey Blanche

Aubrey Blanche is, at heart, a deep thinker who refuses to believe that we cannot choose to build a world better than the one we’ve inherited. Her academic studies have always focused on understanding the causes of harm to the most vulnerable, and have included research on terrorism, defense contracting and military strategy, and the impact of AI applications on queer and disabled users. She spent more than 13 years in multinational technology companies advising on issues of organisational responsibility before joining The Ethics Centre as Director of Ethical Advisory & Strategic Partnerships. She is an advisor to global organisations seeking to scale in an ethical way, with an emphasis on equitable talent practices, justice-aligned ESG approaches, and responsible AI governance. She is a regular writer and speaker on issues of ethics in business, finance, and technology and a master’s student in AI Ethics and Society at the University of Cambridge.


AI can slowly shift an organisation’s core principles. Here's how to spot ‘value drift’ early


Opinion + Analysis, Business + Leadership, Science + Technology

BY Guy Bate and Rhiannon Lloyd 5 MAR 2026

The steady embrace of artificial intelligence (AI) in the public and private sectors in Australia and New Zealand has come with broad guidance about using the new technology safely and transparently, with good governance and human oversight.

So far, so sensible. Aligning AI use with existing organisational values makes perfect sense.

But here’s the catch. Most references to “responsible AI” assume values are like a set of house rules you can write down once, translate into checklists and enforce forever.

But generative AI (GenAI) does not simply follow the rules of the house. It changes the house. GenAI’s distinctive power is not that it automates calculations, but that it automates plausible language.

It writes the summary, the rationale, the email, the policy draft and the performance feedback. In other words, it produces the texts organisations use to explain themselves.

When a system can generate confident, professional-sounding reasons instantly, it can quietly change what counts as a “good reason” to do something.

This is where “value drift” begins – a gradual shift in what feels normal, reasonable or acceptable as people adapt their work to what the technology makes easy and convincing.

Invisible ethical shifts

In the workplace, for example, a manager might use GenAI to draft performance feedback to avoid a hard conversation. The tone is smoother, but the judgement is harder to locate, as is the accountability. Or a policy team uses GenAI to produce a balanced justification for a contested decision. The prose is polished, but the real trade-offs are less visible. For small businesses, the appeal of GenAI lies in speed and efficiency. A sole trader can use it to respond to customers, write marketing copy or draft policies in seconds.

But over time, responsiveness may come to mean instant, AI-generated replies rather than careful, human judgement. The meaning of good service quietly shifts.

None of this requires an ethical breach. The drift happens precisely because the new practice feels helpful.

The biggest ethical effects of GenAI don’t often show up as a single shocking scandal. They are slower and quieter. A thousand small decisions get made a little differently.

Explanations get a little smoother. Accountability becomes a little harder to point to. And before long, we are living with a new normal we did not consciously choose.

If responsible AI use is about more than good intentions and tidy documentation, we need to stop treating values as fixed targets. We need to pay attention to how values shift once AI becomes part of everyday work.

Hidden assumptions

Much of today’s responsible-AI guidance follows a straightforward model: identify the values you care about, embed them in GenAI systems and processes, then check compliance.

This is necessary but also incomplete. Values are not “fixed” once written down in strategy documents or policy templates. They are lived out in practice.

They show up in how people talk, what they notice, what they prioritise and how they justify trade-offs. When technologies change those routines, values get reshaped.

An emerging line of research on technology and ethics shows that values are not simply applied to technologies from the outside. They are shaped from within everyday use, as people adapt their practices to what technologies make easy, visible or persuasive.

In other words, values and technologies shape each other over time, each influencing how the other develops and is understood.

We have seen this before. Social media did not just test our existing ideas about privacy. It gradually changed them. What once felt intrusive or inappropriate now feels normal to many younger users.

The value of privacy did not disappear, but its meaning shifted as everyday practices changed. Generative AI is likely to have similar effects on values such as fairness, accountability and care.

In our research on leadership development, we are exploring how we teach emerging leaders to recognise and reflect on these shifts.

The challenge is not only whether leaders apply the right values to AI, but whether they are equipped to notice how working with these systems may gradually reshape what those values mean in practice.

Constant vigilance

The emphasis in New Zealand and Australia on responsible AI guidance is sensible and pragmatic. It covers governance, privacy, transparency, skills and accountability.

But it still tends to assume that once the right principles and processes are in place, responsibility has been secured.

If values move as AI reshapes practice, though, responsible AI needs a practical upgrade. Principles still matter, but they should be paired with routines that keep ethical judgement visible over time.

Organisations should periodically review AI-mediated decisions in high-stakes areas such as hiring, performance management or customer communication.

They should pay attention not just to technical risks, but to how the meaning of fairness, accountability or care may be changing in practice. And they should make it clear who owns the reasoning behind AI-shaped decisions.

Responsible AI is not about freezing values in place. It is about staying responsible as values shift.

This article was originally published in The Conversation.

BY Guy Bate

Guy is Thematic Lead for Artificial Intelligence, Director of the Master of Business Development (MBusDev) programme, and Professional Teaching Fellow in Strategy and Technology Commercialisation at the University of Auckland Business School.

BY Rhiannon Lloyd

Dr Rhiannon Lloyd is a Senior Lecturer in Leadership in the Management and International Business department at the University of Auckland Business School, and the Director of the Kupe Leadership Scholarship at the University of Auckland. She is also a member of the Aotearoa Centre for Leadership and Governance, UABS. Her research takes a critical look at the theory and practice of responsible and environmental leadership.


Should your AI notetaker be in the room?


Opinion + Analysis, Science + Technology, Business + Leadership

BY Aubrey Blanche 3 FEB 2026

It seems like everyone is using an AI notetaker these days. They’re a way for users to stay more present in meetings and keep better track of commitments and action items, and they perform much better than most people’s memories. On the surface, they look like a simple example of AI living up to the hype of improved efficiency and performance.

As an AI ethicist, I’ve watched more and more of the people I meet with use AI notetakers, and it’s increasingly filled me with unease: the tools are invited to more and more meetings (including ones the user doesn’t actually attend), and I rarely encounter someone who has explicitly asked for my consent to use the tool to take notes.

However, as a busy executive with days full of context switching across a dizzying array of topics, I felt a lot of FOMO at the potential advantages of taking a load off so I could focus on higher-value tasks. It’s clear why people see utility in these tools, especially in an age where many of us are cognitively overloaded and spread too thin. But in our rush to offload some work, we don’t always stop to consider the best way to do it.

When a “low risk” use case isn’t

It might be easy to think that using AI for something as simple as taking notes isn’t ethically challenging. And if the argument is that it should be ethically low stakes, you’re probably right. But the reality is much different.

Taking notes with technology tangles the complex topics of consent, agency, and privacy. Because taking notes with AI requires recording, transcribing, and interpreting someone’s ideas, these issues come to the fore. To use these technologies ethically, everyone in each meeting should:

- Know that they are being recorded

- Understand how their data will be used, stored, and/or transferred

- Have full confidence that opting out is acceptable.

The reality is that this shouldn’t be hard – but the economics of selling AI notetaking tools means that achieving these objectives isn’t as straightforward as download, open, record. This doesn’t mean that these tools can’t be used ethically, but it does mean that in order to do so we have to use them with intention.

What questions to ask:

What models are being used?

Not all AI is built the same, in terms of both technical performance and the safety practices that surround it. Most tools on the market use foundation models from frontier AI labs like Anthropic and OpenAI (which make Claude and ChatGPT, respectively), but some companies train and deploy their own custom models. These companies vary widely in the rigour of their safety practices. You can get a deeper understanding of how a given company or model approaches safety by seeking out the model cards for a given tool.

The particular risk you’re taking will depend on a combination of your use case and the safeguards put in place by the developer and deployer. For example, there’s significantly more risk in using these tools in conversations where sensitive or protected data is shared, and that risk is amplified by using tools that have weak or non-existent safety practices. Put simply, using this technology when you’re dealing with sensitive or confidential information is ethically riskier, and potentially illegal.

Does the tool train on user data?

AI “learns” by ingesting and identifying patterns in large amounts of data, and improves its performance over time by making this a continuous process. Companies have an economic incentive to train using your data – it’s a valuable resource they don’t have to pay for. But sharing your data with any provider exposes you and others to potential privacy violations and data leakages, and ultimately it means you lose control of your data. For example, research has shown that there are techniques that cause large language models (LLMs) to reproduce their training data, and AI creates other unique security vulnerabilities for which there aren’t easy solutions.

For most tools, the default setting is to train on user data. Often, tools will position this approach in terms of generosity, in that providing your data helps improve the service for yourself and others. While users who prioritise sharing over security may choose to keep the default, users that place a higher premium on data security should find this setting and turn it off. Whatever you choose, it’s critical to disclose this choice to those you’re recording.

How and where is the data stored and protected?

The process of transcribing and translating can happen on a local machine or in the “cloud” (which is really just a machine somewhere else connected to the internet). Most tools use a third-party cloud service provider, which expands the potential ethical risk surface.

First, does the tool run on infrastructure associated with a company you’re avoiding? For example, many people specifically avoid spending money on Amazon due to concerns about the ethics of their business operations. If this applies to you, you might consider prioritising tools that run locally, or on a provider that better aligns with your values.

Second, what security protocols does the tool provider have in place? Ideally, you’ll want to see that a company has standard certifications such as SOC 2, ISO 27001 and/or ISO 42001, which show an operational commitment to security, privacy, and safety.

Whatever you choose, this information should be a part of your disclosure to meeting attendees.

How am I achieving fully informed consent?

The gold standard for achieving fully informed consent is making the request explicit and opt in as a default. While first-generation notetakers were often included as an “attendee” in meetings, newer tools on the market often provide no way for everyone in the meeting to know that they’re being recorded. If the tool you use isn’t clearly visible or apparent to attendees, the ethical burden of both disclosure and consent gathering falls on you.

This issue isn’t just an ethical one – it’s often a legal one. Depending on where you and attendees are, you might need a persistent record that you’ve gotten affirmative consent to create even a temporary recording. For me, that means I start meetings with the following:

I wanted to let you know that I like to use an AI notetaker during meetings. Our data won’t be used for training, and the tool I use relies on OpenAI and Amazon Web Services. This helps me stay more present, but it’s absolutely fine if you’re not comfortable with this, in which case I’ll take notes by hand.

Doing this might feel a bit awkward or uncomfortable at first, but it’s the first step not only in acting ethically, but modelling that behaviour for others.

Where I landed

Ultimately, I decided that using an AI notetaker in specific circumstances was worth the risk involved for the work I do, but I set some guardrails for myself. I don’t use it for sensitive conversations (especially those involving emotional experiences) or those where confidential data is shared. I start conversations with my disclosure, and offer to share a copy of the notes for both transparency and accuracy.

But perhaps the broader lesson is that I can’t outsource ethics: the incentive structures of the companies producing these tools aren’t often aligned to the values I choose to operate with. But I believe that by normalising these practices, we can take advantage of the benefits of this transformative technology while managing the risks.

AI was used to review research for this piece and served as a constructive initial editor.

BY Aubrey Blanche

Aubrey Blanche is a responsible governance executive with 15 years of impact. An expert in issues of workplace fairness and the ethics of artificial intelligence, her experience spans HR, ESG, communications, and go-to-market strategy. She seeks to question and reimagine the systems that surround us to ensure that all can build a better world. A regular speaker and writer on issues of responsible business, finance, and technology, Blanche has appeared on stages and in media outlets all over the world. As Director of Ethical Advisory & Strategic Partnerships, she leads our engagements with organisational partners looking to bring ethics to the centre of their operations.


The ethics of AI’s untaxed future

Opinion + Analysis, Science + Technology, Business + Leadership

BY Dia Bianca Lao 24 NOV 2025

“If a human worker does $50,000 worth of work in a factory, that income is taxed. If a robot comes in to do the same thing, you’d think we’d tax the robot at a similar level,” Bill Gates famously said. His call raises an urgent ethical question now facing Australia: When AI replaces human labour, who pays for the social cost?

As AI becomes a cheaper alternative to human labour, the question is no longer if it will dramatically reshape the workforce, but how quickly, and whether the nation’s labour market can adapt in time.

New technology always seems like the stuff of science fiction until its seamless transition from novelty to necessity. Today AI is past its infancy and is now shaping real-world industries. The simultaneous emergence of its diverse use cases and the maturing of automation technology underscore how rapidly it’s evolving, transforming this threat into reality sooner than we think.

Historically, automation tended to focus on routine physical tasks, but today’s frontier extends into cognitive domains. Unlike past innovations that still relied on human oversight, the autonomous nature of emerging technologies threatens to make human labour obsolete with its broader capabilities.

While history shows that technological revolutions have ultimately improved output, productivity, and wages in the long term, the present wave may prove more disruptive than those before. In 2017, Bill Gates foresaw this looming paradigm shift and famously argued for companies to pay a ‘robot tax’ to moderate the pace at which AI impacts human jobs and help fund other employment types.

Without any formal measures, the costs of AI-driven displacement will likely mostly fall on workers and society, while companies reap the benefits with little accountability.

According to the World Economic Forum, while AI is predicted to create 69 million new jobs, 83 million existing jobs may be phased out by 2027, resulting in a net decrease of 14 million jobs or approximately 2% of current employment. They also projected that 23% of jobs globally will evolve in the next five years, driven by advancements in technology. While the full impact is not yet visible in official employment statistics, the shift toward reducing reliance on human labour through automation and AI is already underway, with entry-level roles and jobs involving logistics, manufacturing, admin, and customer service being the most impacted.

For example, Aurora’s self-driving trucks are officially making regular roundtrips on public roads delivering time- and temperature-sensitive freight in the U.S., while Meituan is making drone deliveries increasingly common in China’s major cities. We now live in a world where you can get your boba milk tea delivered by a drone in less than 20 minutes in places like Shenzhen. Meanwhile in Australia, Rio Tinto has also deployed fully autonomous trains and autonomous haul trucks across its Pilbara iron ore mines, increasing operational time and contributing to a 15% reduction in operating costs.

Companies have already begun recalibrating their workforce, and there is no stopping this train. In the past 12 months, CBA and Bankwest have cut hundreds of jobs across various departments despite rising profits. Forty-five of these roles were replaced by an AI chatbot handling customer queries, while the permanent closure of all Bankwest branches has seen the bank transition to a digital bank, with no intention of bringing back the lost positions. While some argue that redeployment opportunities exist or new jobs might emerge, details remain vague.

Is it possible to fully automate an economy and eliminate the need for jobs? Elon Musk certainly thinks so. It’s no wonder that a growing number of tech elite are investing heavily to replace human labour with AI. From copywriting to coding, AI has proven its versatility in speeding up productivity in all aspects of our lives. Its potential for accelerating innovation, improving living standards and economic growth is unparalleled, but at what cost?

What counts as good for the economy has historically benefited a select few, with technology frequently being a catalyst for this dynamic. For example, the benefits of the Industrial Revolution, including the creation of new industries and increased productivity, were initially concentrated in the hands of those who owned the machinery and capital, while the widespread benefits trickled down later. Without ethical frameworks in place, AI is positioned to compound this inequality.

Some proposals argue that if we make taxes on human labour cheaper while increasing taxes on AI machines and tools, this could encourage companies to view AI as complementary instead of a replacement for human workers. This levy could be a means for governments to distribute AI’s socioeconomic impacts more fairly, potentially funding retraining or income support for displaced workers.

If a robot tax is such a good idea, then why did the European Parliament reject it? Many argue that taxing productivity tools could hinder competitiveness. Without global coordination to implement this, if one country taxes AI and others don’t, it may create an uneven playing field and stifle innovation. How would policymakers even define how companies would qualify for this levy or measure how much to tax, when it’s hard to attribute profits derived from AI? Unlike human workers’ earnings, taxing AI isn’t as straightforward.

The challenge of developing policies that incentivise innovation while ensuring that its benefits and burdens are shared responsibly across society persists. The government’s focus on retraining and upskilling workers to help with this transition is a good start, but it cannot address all the challenges of automation fast enough. Relying solely on these programs risks overlooking structural inequities, such as the disproportionate impact on lower-income or older workers in certain industries, and long-term displacement, where entire job categories may vanish faster than workers can be retrained.

Our fiscal policies should adapt to the evolving economic landscape to help smooth this shift and fund social safety nets. A reduction in human labour’s share in production will significantly impact government revenue unless new measures of taxing capital are introduced.

While a blanket “robot tax” is impractical at this stage, incremental changes to existing taxation policies to target sectors that are most vulnerable to disruption are a possibility. Ideally, policies should distinguish between technologies that substitute for human labour and those that complement it, to disincentivise only the former. While this distinction can be challenging, it offers a way to slow down job displacement, giving workers and welfare systems more time to adapt and generate revenue to help with the transition without hindering productivity.

As Microsoft’s CEO Satya Nadella warns, “With this empowerment comes greater human responsibility — all of us who build, deploy, and use AI have a collective obligation to do so responsibly and safely, so AI evolves in alignment with our social, cultural, and legal norms. We have to take the unintended consequences of any new technology along with all the benefits, and think about them simultaneously.”

The challenge in integrating AI more equitably into the economy is ensuring that its broad societal benefits are amplified, while reducing its unintended negative consequences. AI has the potential to fundamentally accelerate innovation for public good but only if progress is tied to equitable frameworks and its ethical adoption.

Australia already regulates specific harms of AI, protecting privacy and personal information through the Privacy Act 1988 and addressing bias through the Australian Privacy Principles (APPs). These examples show that targeted regulation is possible. However, the next step should include ethical guardrails for AI-driven job displacement, such as exploring more equitable taxation, redistribution policies, and accountability frameworks before it’s too late. This transformation will require collaboration between governments, companies, and global organisations to build a resilient and inclusive AI-powered future.

The ethics of AI’s untaxed future by Dia Bianca Lao is one of the Highly Commended essays in our Young Writers’ 2025 Competition. Find out more about the competition here.

BY Dia Bianca Lao

Dia Bianca Lao is a marketer by trade but a writer at heart, with a passion for exploring how ethics, communication, and culture shape society. Through writing, she seeks to make sense of complexity and spark thoughtful dialogue.

Meet Aubrey Blanche: Shaping the future of responsible leadership

Opinion + Analysis, Business + Leadership, Science + Technology

BY The Ethics Centre 4 NOV 2025

We’re thrilled to introduce Aubrey Blanche, our new Director of Ethical Advisory & Strategic Partnerships, who will lead our engagements with organisational partners looking to operate with the highest standards of ethical governance and leadership.

Aubrey is a responsible governance executive with 15 years of impact. An expert in issues of workplace fairness and the ethics of artificial intelligence, her experience spans HR, ESG, communications, and go-to-market strategy. She seeks to question and reimagine the systems that surround us to ensure that all can build a better world. A regular speaker and writer on issues of ethical business, finance, and technology, she has appeared on stages and in media outlets all over the world.

To better understand the work she’ll be doing with The Ethics Centre, we sat down with Aubrey to discuss her views on AI, corporate responsibility, and sustainability.

We’ve seen the proliferation of AI impact the way in which we work. What does responsible AI use look like to you – for both individuals and organisations?

I think that the first step to responsibility in AI is questioning whether we use it at all! While I believe it is and will be a transformative technology, there are major downsides I don’t think we talk about enough. We know that it’s not quite as effective as many people running frontier AI labs aim to make us believe, and it uses an incredible amount of natural resources for what can sometimes be mediocre returns.

Next, I think that to really achieve responsibility we need partnerships between the public and private sector. I think that we need to ensure that we’re applying existing regulation to this technology, whether that’s copyright law in the case of training, consumer protection in the case of chatbots interacting with children, or criminal prosecution regarding deepfake pornography. We also need business leaders to take ethics seriously, and to build safeguards into every stage from design to deployment. We need enterprises to refuse to buy from vendors that can’t show their investments in ensuring their products are safe.

And last, we need civil society to actively participate in incentivising those actors to behave in ways that are of benefit to all of society (not just shareholders or wealthy donors). That means voting for politicians that support policies that support collective wellbeing, boycotting companies complicit in harms, and having conversations within their communities about how these technologies can be used safely.

In a time where public trust is low in businesses, how can they operate fairly and responsibly?

I think the best way that businesses can build responsibility is to be more specific. I think people are tired of hearing “We’re committed to…”. There’s just been too much greenwashing, too much ethics washing, and too many “commitments” to diversity that haven’t been backed up by real investment or progress. The way through that is to define the specific objectives you have in relation to responsibility topics, publish your specific goals, and regularly report on your progress – even if it’s modest.

And most importantly, do this even when trust is low. In a time of disillusionment, you’ll need to have the moral courage to do the right thing even when there is less short-term “credit” for it.

How can we incentivise corporations to take responsible action on environmental issues?

I think that regulation can be a powerful motivator. I’m really excited that the Australian Accounting Standards Board is bringing new requirements into force that, at least for large companies, will force them to proactively manage climate risks and their impacts. While I don’t think it’s the whole answer, a regulatory “push” can be what’s needed for executives to see that actively thinking about climate in the context of their operations can be broadly beneficial to operations.

What are you most excited about sinking your teeth into at The Ethics Centre?

There’s so much to be excited about! But something that I’ve found wildly inspiring is working with our Young Ambassadors – early career professionals in banking and financial services who are working with us to develop their ethical leadership skills. While I have enjoyed working with our members – and have spent the last 15 years working with leaders in various areas of corporate responsibility – there’s nothing quite like the optimism you get when learning from people who care so much and who show us what future is possible.

Lastly – the big one, what does ethics mean to you?

A former boss of mine once told me that leadership is not about making the right choice when you have one: it’s about making the best choice you can when you have terrible ones and living with that choice. I think in many cases that’s what ethics is. It gives us a framework not to do the right thing when the answer is clear, but to align ourselves as closely as we can with our values and the greater good when our options are messy, complicated, or confusing.

Personally, I’ve spent a deep amount of time thinking about my values, and if I were forced to distill them down to two, I would wholeheartedly choose justice and compassion. I have found that when I consider choices through those frames, I both feel more like myself and like I’ve made choices that are a net good in the world. And I’ve been lucky enough to spend my career in roles where I got to live those values – that’s a privilege I don’t take for granted, and one of the reasons I’m so thrilled to be in this new role with The Ethics Centre.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

Love and the machine

When we think about love, we picture something inherently human. We envision a process that’s messy, vulnerable, and deeply rooted in our connection with others, fuelled by an insatiable desire to be understood and cared for. Yet today, love is being reshaped by technology in ways we never imagined before.

With the rise of apps such as Blush, Replika, and Character.AI, people are forming personal relationships with artificial intelligence. For some, this may sound absurd, even dystopian. But for others, it has become a source of comfort and intimacy.

What strikes me is how such behaviour is often treated as a fun novelty or dismissed as a symptom of loneliness, but this outlook can miss the deeper picture.

Many may misunderstand forming attachments with AI as another harmless, emerging trend, sweeping its profound ethical dimensions under the rug. In reality, this phenomenon forces us to rethink what love is and what humans require from relationships to flourish.

It is not difficult to see the appeal. AI companions offer endless patience, unconditional affirmations and availability at any hour, which human relationships struggle to live up to. Additionally, the World Health Organisation has declared loneliness a “global public health concern” with 1 in 6 people affected worldwide. Mark Zuckerberg, the founder of Meta, framed AI therapy and companionship as remedies to our society’s growing modern disconnection. In recent surveys, 25% of young adults also believe that AI partners could potentially replace real-life romantic relationships.

One of the main ethical concerns is the commodification of connection and intimacy. Unlike human love, built from intrinsically valuable interactions, AI relationships are increasingly shaped by what sociologist George Ritzer calls McDonaldization: the pursuit of calculability, predictability, control, and efficiency. These apps are not designed to nurture a user’s social skills as many believe, but to keep consumers emotionally hooked.

Concerns of a dangerous slippery slope arise as intimacy becomes transactional. Chatbot apps often operate on subscription models where users can “unlock” more customisable or sexual features by paying a fee. By monetising upgrades for further affection, companies profit from users’ loneliness and vulnerability. What appears as love is in fact a business scheme that brings profit, ultimately benefiting large corporations instead of their everyday consumers.

In this sense, we notice one of humanity’s most cherished experiences being corporatised into a carefully packaged product.

Beyond commodification lies the insidious risk of emotional dependency and withdrawal from real-life interactions. Findings from OpenAI and the MIT Media Lab revealed that heavy users of ChatGPT, especially those engaging in emotionally intense conversations, tend to experience increased loneliness long-term and fewer offline social relationships. Dr Andrew Rogoyski of the Surrey Institute for People-Centred AI suggested we are “poking around with our basic emotional wiring with no idea of the long-term consequences.”

A Cornell University study also found that usage of voice-based chatbots initially mitigated loneliness. However, these benefits were reduced significantly with high usage rates, which correlated with higher isolation, increased emotional dependency, and reduced in-person engagement. While AI might temporarily cushion feelings of seclusion, a lasting overreliance seems to exacerbate it.

The misunderstanding further deepens as AI relationships are portrayed as private and inconsequential. What’s wrong with someone choosing to find comfort in an AI partner if it harms no one? However, this risks framing love as a personal preference rather than ongoing relational interactions that shape our character and community.

If we refer to the principles of virtue ethics, Aristotle’s idea of eudaimonia (a flourishing, well-lived life) relies on developing virtues like empathy, patience, and forgiveness. Human connections promote personal growth, with their inevitable misunderstandings, disappointments, and the need to forgive. A chatbot like Blush has its responses built upon a Large Language Model to mirror inputs and infinitely affirm them. It may always say “the right thing,” but over time, this inhibits our character development.

It is still undeniably important to acknowledge the potential benefits of AI chatbots. For individuals who, due to physical or psychological reasons, are not in a position to form real-world relationships, chatbots can provide an accessible stepping-stone to an emotional outlet. There’s no need to fear or avoid these platforms entirely, but we must reflect consciously upon their deeper ethical implications. Chatbots can supplement our relationships and offer support, but they should never be misunderstood as a replacement for genuine human love.

Decades from now, it might be common to ask whether your neighbour’s partner is human or AI. By then, the foundations of human connection will have shifted in irreversible ways. If love is indeed at the heart of what makes us human, we should at least realise that although programmed chatbots can say “I love you,” only human love teaches us what it truly means.

Love and the machine by Ariel Bai is the winning essay in our Young Writers’ 2025 Competition (13-17 age category). Find out more about the competition here.

BY Ariel Bai

Ariel is a year 10 student currently attending Ravenswood. Passionate about understanding people and the world around her, she enjoys exploring contemporary and social issues through her writing. Her interest in current global trends and human experiences prompted her to craft this piece.

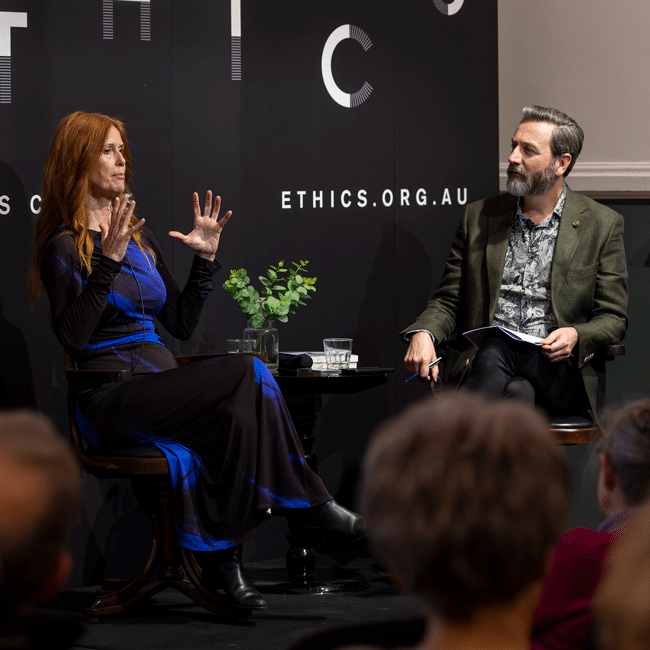

3 things we learnt from The Ethics of AI

Opinion + Analysis, Science + Technology, Business + Leadership

BY The Ethics Centre 17 SEP 2025

As artificial intelligence is becoming increasingly accessible and integrated into our daily lives, what responsibilities do we bear when engaging with and designing this technology? Is it just a tool? Will it take our jobs? In The Ethics of AI, philosopher Dr Tim Dean and global ethicist and trailblazer in AI, Dr Catriona Wallace, sat down to explore the ethical challenges posed by this rapidly evolving technology and its costs on both a global and personal level.

Missed the session? No worries, we’ve got you covered. Here are 3 things we learnt from the event, The Ethics of AI:

We need to think about AI in a way we haven’t thought about other tools or technology

In 2023, the CEO of Google, Sundar Pichai, described AI as more important than the invention of fire, claiming it even surpassed great leaps in technology such as electricity. Catriona takes this further, calling AI “a new species”, because “we don’t really know where it’s all going”.

So is AI just another tool, or an entirely new entity?

When AI is designed, it’s programmed with algorithms and fed with data. But as Catriona explains, AI begins to mirror users’ inputs and make autonomous decisions – often in ways even the original coders can’t fully explain.

Tim points out that we tend to think of technology instrumentally, as a value-neutral tool at our disposal. But drawing from German philosopher Martin Heidegger, he reminds us that we’re already underthinking tools and what they can do – tools have affordances, they shape our environment and steer our behaviour. So “when we add in this idea of agency and intentionality” Tim says, “it’s no longer the fusion of you and the tool having intentionality – the tool itself might have its own intentions, goals and interests”.

AI will force us to reevaluate our relationship with work

The 2025 Future of Jobs Report from The World Economic Forum estimates that by 2030, AI will replace 92 million current jobs but 170 million new jobs will be created. While we’ve already seen this kind of displacement during technological revolutions, Catriona warns that the unemployed workers most likely won’t be retrained into the new roles.

“We’re looking at mass unemployment for front line entry-level positions which is a real problem.”

A universal basic income might be necessary to alleviate the effects of automation-driven unemployment.

So if we all were to receive a foundational payment, what does the world look like when we’re liberated from work? Since many of us tie our identity to our jobs and what we do, who are we if we find fulfilment in other types of meaning?

Tim explains, “work is often viewed as paid employment, and we know – particularly women – that not all work is paid, recognised or acknowledged. Anyone who has a hobby knows that some work can be deeply meaningful, particularly if you have no expectation of being paid”.

Catriona agrees, “done well, AI could free us from the tie to labour that we’ve had for so long, and allow a freedom for leisure, philosophy, art, creativity, supporting others, caring for loving, and connection to nature”.

Tech companies have a responsibility to embed human-centred values at their core

From harmful health advice to fabricating vital information, the implications of AI hallucinations have been widely reported.

The Responsible AI Index reveals a huge gap in business leaders’ understanding of AI ethics, with only 30% of organisations knowing how to implement ethical and responsible AI. Catriona explains this is a problem because “if we can’t create an AI agent or tool that is always going to make ethical recommendations, then when an AI tool makes a decision, there will always be somebody who’s held accountable”.

She points out that within organisations, executives, investors, and directors often don’t understand ethics deeply and pass decision making down to engineers and coders — who then have to draw the ethical lines. “It can’t just be a top-down approach; we have to be training everybody in the organisation.”

So what can businesses do?

AI must be carefully designed with purpose, developed to be ethical and regulated responsibly. The Ethics Centre’s Ethical by Design framework can guide the development of any kind of technology to ensure it conforms to essential ethical standards. This framework can be used by those developing AI, by governments to guide AI regulation, and by the general public as a benchmark to assess whether AI conforms to the ethical standards they have every right to expect.

The Ethics of AI can be streamed On Demand until 25 September; book your ticket here. For a deeper dive into AI, visit our range of articles here.

Ask an ethicist: Should I use AI for work?

Opinion + Analysis, Science + Technology, Business + Leadership

BY Daniel Finlay 8 SEP 2025

My workplace is starting to implement AI usage in a lot of ways. I’ve heard so many mixed messages about how good or bad it is. I don’t know whether I should use it, or to what extent. What should I do?

Artificial intelligence (AI) is quickly becoming unavoidable in our daily lives. Google something, and you’ll be met with an “AI overview” before you’re able to read the first result. Open up almost any social media platform and you’ll be met with an AI chat bot or prompted to use their proprietary AI to help you write your message or create an image.

Unsurprisingly, this ubiquity has rapidly extended to the workplace. So, what do you do if AI tools are becoming the norm but you’re not sure how you feel about it? Maybe you’re part of the 36% of Australians who aren’t sure if the benefits of AI outweigh the harms. Luckily, there are a few ethical frameworks to help guide your reasoning.

Outcomes

A lot of people care about what AI is going to do for them, or conversely how it will harm them or those they care about. Consequentialism is a framework that tells us to think about ethics in terms of outcomes – often the outcomes of our actions, but really there are lots of types of consequentialism.

Some tell us to care about the outcomes of rules we make, beliefs or attitudes we hold, habits we develop or preferences we have (or all of the above!). The common thread is the idea that we should base our ethics around trying to make good things happen.

This might seem simple enough, but ethics is rarely simple.

AI usage is having and is likely to have many different competing consequences, short and long-term, direct and indirect.

Say your workplace is starting to use AI tools. Maybe they’re using email and document summaries, or using AI to create images, or using ChatGPT like they would use Google. Should you follow suit?

If you look at the direct consequences, you might decide yes. Plenty of AI tools give you an edge in the workplace or give businesses a leg up over others. Being able to analyse data more quickly, get assistance writing a document or generate images out of thin air has a pretty big impact on our quality of life at work.

On the other hand, there are some potentially serious direct consequences of relying on AI too. Most public large language model (LLM) chatbots have had countless issues with hallucinations. This is the phenomenon where AI perceives patterns that cause it to confidently produce false or inaccurate information. Given how anthropomorphised chatbots are, which lends them an even higher degree of our confidence and trust, these hallucinations can be very damaging to people on both a personal and business level.

Indirect consequences need to be considered too. The exponential increase in AI use, particularly LLM generative AI like ChatGPT, threatens to undo the work of climate change solutions by more than doubling our electricity needs, increasing our water footprint and greenhouse gas emissions, and putting unneeded pressure on the transition to renewable energy. This energy usage is predicted to double or triple again over the next few years.

How would you weigh up those consequences against the personal consequences for yourself or your work?

Rights and responsibilities

Deontology is a different way of looking at things, one that can often help us bridge the gap when comparing different sets of consequences. This ethical framework focuses on rights (ways we should be treated) and duties (ways we should treat others).

One of the major challenges that generative AI has brought to the fore is how to protect creative rights while still being able to innovate this technology on a large scale. AI isn’t capable of creating ‘new’ things in the same way that humans can use their personal experiences to shape their creations. Generative AI is ‘trained’ by giving the models access to trillions of data points. In the case of generative AI, these data points are real people’s writing, artwork, music, etc. OpenAI (creator of ChatGPT) has explicitly said that it would be impossible to create these tools without the access to and use of copyrighted material.

In 2023, the Writers Guild of America went on a five-month strike to secure better pay and protections against the exploitation of its members’ material in AI model training and subsequent job replacement or pay decreases. In 2025, Anthropic settled for $1.5 billion in a lawsuit over its piracy of around 500,000 books used to train its AI model.

Creative rights present a fundamental challenge to the ethics of using generative AI, especially at work. The ability to create imagery for free or at a very low cost with AI means businesses now have the choice to sidestep hiring or commissioning real artists – an especially fraught decision point if the imagery is being used with a profit motive, as it is arguably being made with the labour of hundreds or thousands of uncompensated artists.

What kind of person do you want to be?

Maybe you’re not in an office, though. Maybe your work is in a lab or field research, where AI tools are being used to do things like speed up the development of life-changing drugs or enable better climate change solutions.

Intuitively, these uses might feel more ethically salient, and a virtue ethics point of view could help make sense of that. Virtue ethics is about finding the valuable middle ground between extreme sets of characteristics – the virtues that a good person, or the best version of yourself, would embody.

On the one hand, it’s easy to see how this framework would encourage use of AI that helps others. A strong sense of purpose, altruism, compassion, care, justice – these are all virtues that can be lived out by using AI to make life-changing developments in science and medicine for the benefit of society.

On the other hand, generative AI puts another spanner in the works. There is an increasing body of research looking at the negative effects of generative AI on our ability to think critically. Overreliance on, and overconfidence in, AI chatbots can lead to the erosion of critical thinking, problem-solving and independent decision-making skills. With this in mind, virtue ethics could also lead us to be wary of the way that we use particular kinds of AI, lest we become intellectually lazy or incompetent.

The devil in the detail

AI, in all its various capacities, is revolutionising the way we work and is clearly here to stay. Whether you opt in or not is hopefully still up to you in your workplace, but using a few different ethical frameworks, you can prioritise your values and principles and decide whether and what type of AI usage feels right to you and your purpose.

Whether you’re looking at the short- and long-term impacts of frequent AI chatbot usage, the rights people have to their intellectual property, the good you can do with AI tools or the type of person you want to be, maintaining a level of critical reflection is integral to making your decision ethical.

BY Daniel Finlay

Daniel is a philosopher, writer and editor. He works at The Ethics Centre as Youth Engagement Coordinator, supporting and developing the futures of young Australians through exposure to ethics.
