FODI returns: Why we need a sanctuary to explore dangerous ideas


BY The Ethics Centre 22 MAY 2024

New and challenging ideas are an endangered species these days. They are surrounded by predators on all sides.

There are the entrenched interests that want to maintain the status quo. There are trolls who will beat them down just for laughs. Then there is the threat of cancellation by those who deem challenging ideas unworthy of being expressed at all.

Yet, as their natural habitat is being invaded, we as a society need these ideas to thrive more than ever before. We must continually challenge our assumptions, for history has shown us how often they end up being false. We must be wary of the status quo, and the powers that work to preserve it for their own benefit (and our detriment). We need to have open and honest conversations, lest our minds – and our ideas – become outdated and stale in a fast-moving world.

What we need is a sanctuary where new and challenging ideas can be nurtured and kept safe from the predators until they’re strong enough to be released into the wilderness and thrive. We need a space where they can be heard with open ears and challenged by open minds. A space where discourse is steered by good faith and reason rather than tribalism and fury.

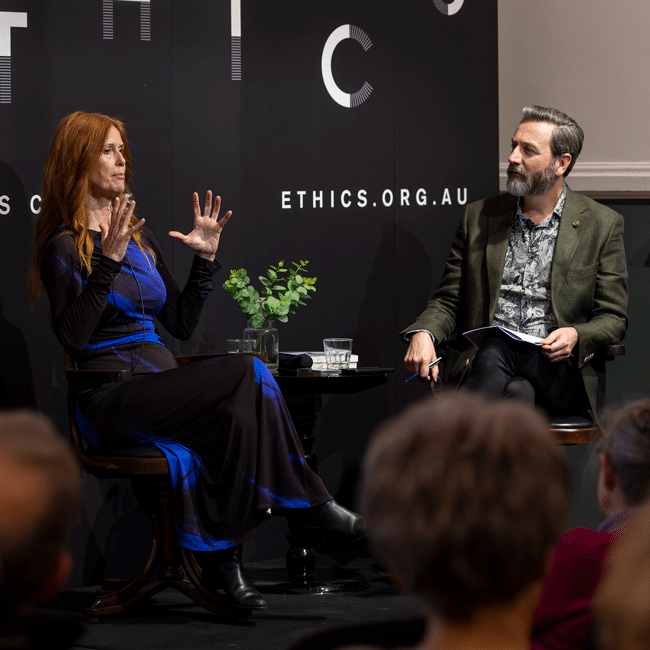

FODI Festival Director, Danielle Harvey, says that is what The Festival of Dangerous Ideas (FODI, to its fans) is all about this year. “In a litany of entrenched ideas, 24-hour news cycles, shallow information and self-censorship, we desperately need a space where we can engage with challenging ideas in good faith.”

FODI is a “sanctuary”: not just a refuge that keeps us safe from the noise, the trolls and the bad-faith speakers found in the wilderness of public discourse, but also a space where we’re safe to engage with powerful and provocative ideas.

A lot is said about “safe spaces” these days. But the term typically refers to only one type: “safe from…” spaces, where people can be protected from things that might be harmful, triggering, discriminatory or distressing. These spaces are important in a world filled with dangers, because we should always respect the inherent dignity and vulnerability of others, and seek to protect them from harm.

But if we only have “safe from…” spaces, we risk shutting down difficult conversations that we need to have. We might stifle precisely the kinds of discourse that could make “safe from…” spaces less necessary. We can end up being coddled rather than becoming resilient or testing our own ideas.

FODI exemplifies another kind of safe space: “safe to…”. This is a space where people are able to express themselves authentically and in good faith without fear of reprisal, where they can engage with difficult and controversial topics that might even be deemed offensive in other contexts. These are the conversations we have to have if we’re to combat the problems that make “safe from…” spaces necessary.

“FODI gives us an opportunity to hear powerful and provocative speakers from around the world talk on important and rousing topics,” says Harvey. It’s also a sanctuary. One where “audiences can engage with these ideas in a way that we, unfortunately, can’t in the wild. In our sanctuary you are safe from hype and safe to listen and to ask questions.”

Such a sanctuary needs to be carefully curated to enable open, good-faith engagement with dangerous ideas. That’s why this year’s FODI will be special. When you come to FODI, you will enter a sacred space, with a shared understanding of our mutual purpose and a will to create a better world. A space to let curiosity guide you, where good faith reigns, where you’re free from intimidation and shame for what you choose to believe and express, a safe place to think deeply about the world.

The Festival of Dangerous Ideas returns 24-25 August 2024 to Carriageworks, Sydney. Subscribe for program updates at festivalofdangerousideas.com.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

Is there such a thing as ethical investing?


BY The Ethics Centre 22 APR 2024

If you’re looking to start investing in the stock market (and you’ve stumbled across this article), chances are you might be investigating how you can do it ethically – without supporting companies that are actively causing harm to the world.

“Ethical investing” has no fixed definition in Australia, as Life and Shares host Cris Parker points out in The Ethics Centre’s latest podcast. Susheela Peres Da Costa, Chair of the Responsible Investment Association of Australasia (RIAA), says that ethical, or responsible, investing is typically about “consumers assuming something is screened out of the portfolio – what you don’t invest in is a typical ethical investment question.”

But finding out which companies are involved in activities you don’t want to financially support is not as cut and dried as you might hope.

“There’s a lot of seductively simple solutions out there,” Peres Da Costa says, such as points-based ESG (environmental, social and governance) scoring systems.

“Some of the most profitable companies involved in some of the most harmful activities actually do very well on some of the scoring systems,” Peres Da Costa says, “because they’ve got great volunteering programs and programs for replacing their light bulbs with LEDs, and they give a lot of money to charity. But investors need to be smart about not falling for the gloss. As someone who’s professionally analysed company reports, I would never, ever believe anything in a value statement…”

“However, we really need to address the elephant in the room, which is actually the cost. It is just so expensive to obtain proper financial advice – to give you an example, the median price in Australia is $5,000 per year.”

Even if you don’t think of yourself as being in the stock market, if you have super in Australia, then your super fund is investing your money on your behalf. Happily, many super funds offer investment packages classified as “sustainable”, which aim to buy into clean and renewable energy shares and avoid companies involved in fossil fuels.

The RIAA ranks Australian super funds on their responsible investment approaches: its Responsible Investment Super Studies highlight funds that choose shares in companies that aim to do good for people and the earth and screen out those with poor or questionable practices.

Still, the waters are murky as to which companies are doing the most harm, and which are trying to do good. As Life and Shares host Cris Parker points out, one of the best performing sustainable exchange traded funds in the past year had a large holding in mining company BHP.

But that’s not necessarily a bad thing, says Peres Da Costa. “It’s easy to think about mining companies as those that dig up the ground, tear down the trees and all those things. But they’re also the ones that are producing the lithium, for example, and all the rare earths that we need to transition from our dependence on fossil fuels. So I think one of the things you have to be really careful of is assuming that a company is one thing, good or bad.”

Of course, there’s no legislation to prevent you from investing in whatever you want – and if you don’t buy shares in a particular company, someone else probably will. Unless you have the financial power to buy a controlling stake, you won’t be able to control board decisions as a shareholder. But that doesn’t mean you can’t try to influence them.

“If your objective is to have an impact in the world, then the levers that you have available to you are very different if you’re a consumer versus an institution, and you need to have a theory of change about how you have that impact,” Peres Da Costa says.

A theory of change is a conceptual model outlining the specific actions and interventions your investments will use to achieve the desired impact. In the case of sustainability and climate change, this means putting your money into renewables, divesting from fossil fuel companies, and being an active shareholder – paying attention to what your invested companies are doing, and wielding your shareholder voting power at AGMs (Annual General Meetings).

One simple action that should be on every good global citizen’s to-do list is checking your super fund’s ethical position and investments, along with checking where your bank invests and whether your power company uses renewables or coal and gas.

Looking to your own values and ethics, and using them as a guide to what you will and won’t invest in, is probably the best way to work out how to invest ethically.

For instance, does it bother you to earn dividends from companies involved in mining and fossil fuels or weapons manufacturing, from companies whose supply chains aren’t covered by sustainability or anti-slavery commitments, or from alcohol, tobacco or unethical pharmaceutical companies? Are you willing to make trade-offs and invest in a company doing harm in one area but good in another? As Peres Da Costa says, you can’t assume “a company is one thing, good or bad.”

Whether you’re actively trading shares and avidly following financial news or just tinkering with your super investment options, the stock market will always be an ethical minefield. But with research and the knowledge of how to take control of your money and how it’s used, you can start investing more responsibly and purposefully.

Life and Shares unpicks the share market so you can make decisions you’ll be proud of. Listen now on Spotify and Apple Podcasts.

Ethics in your inbox.

Get the latest inspiration, intelligence, events & more.

By signing up you agree to our privacy policy

You might be interested in…

Opinion + Analysis

Business + Leadership, Relationships

So your boss installed CCTV cameras

Explainer

Business + Leadership

Ethics Explainer: Social license to operate

Opinion + Analysis

Business + Leadership

Ready or not – the future is coming

Opinion + Analysis

Business + Leadership

United Airlines shows it’s time to reframe the conversation about ethics

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

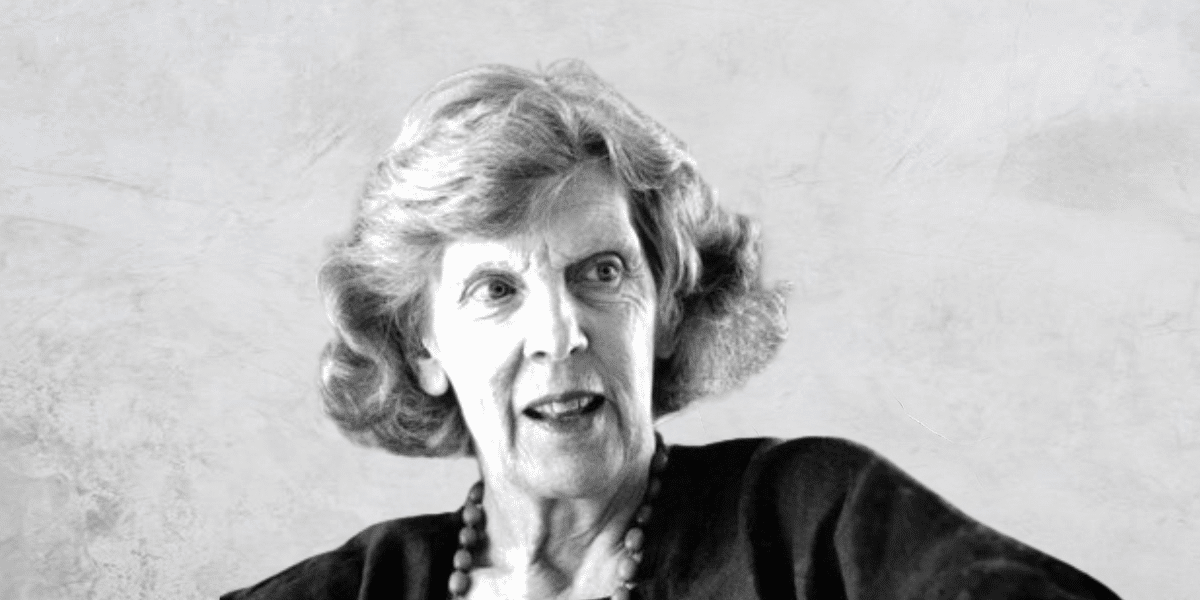

Big Thinker: Philippa Foot

Philippa Foot (1920-2010) was one of the founders of contemporary virtue ethics, reviving Aristotelian ethics in the 20th century. She introduced a genre of moral dilemmas, most famously the trolley problem, as part of her analysis of debates around abortion and the doctrine of double effect.

Philippa Foot was born in England in 1920. Although she received no formal education during her childhood, she obtained a place at Somerville College, then one of Oxford’s women’s colleges. After graduating in philosophy, politics and economics in 1942, she briefly worked as an economist for the British Government. Apart from this, she spent her career at Oxford as a lecturer, tutor and fellow, interspersed with visiting professorships at various American universities, including Cornell, MIT, the City University of New York and the University of California, Los Angeles.

Virtue ethics

In the philosophical world, Philippa Foot is best known for her work repopularising virtue ethics in the 20th century. Virtue ethics defines good actions as ones that embody virtuous character traits, like courage, loyalty or wisdom. This is distinct from deontological and consequentialist theories, which encourage us to focus on the action itself (whether it conforms to a duty or rule) or on its consequences, rather than on the kind of person who is doing the action.

“What I believe is that there are a whole set of concepts that apply to living things and only to living things, considered in their own right. These would include, for instance, function, welfare, flourishing, interests, the good of something. And I think that all these concepts are a cluster. They belong together.”

The doctrine of double effect

Imagine you are the driver of a runaway trolley that is barrelling down the tracks. You have the option to do nothing, and let five people die, or the option to switch the tracks and kill one person.

This is Philippa Foot’s famous trolley problem. This thought experiment encourages us to think about the moral differences between actively causing death (e.g. pulling a lever to get the trolley to change tracks) and passively or indirectly causing death (doing nothing, allowing the trolley to kill five people). Utilitarians might argue that five deaths are far worse than one, but many people instinctively feel that actively causing a death has a different moral weight than doing nothing.

Perhaps Foot’s most influential paper is The Problem of Abortion and the Doctrine of the Double Effect, published in 1967. In it, she examines what is called the doctrine of the double effect, which holds that an action with a very bad effect (like a death) can sometimes be permissible if that effect is foreseen but not intended, even where it would be wrong to bring it about deliberately. The trolley problem is one of the cases she uses to test the doctrine, alongside various others.

“The words ‘double effect’ refer to the two effects that an action may produce: the one aimed at, and the one foreseen but in no way desired. By ‘the doctrine of the double effect’ I mean the thesis that it is sometimes permissible to bring about by oblique intention what one may not directly intend.”

For example, what if one person needed a large dose of a rare medicine to save their life, but that same amount of medicine could save the lives of five others who each needed less? Would we think that the “oblique intention” of a nurse who administers the medicine to one person instead of the five people is justified?

Foot finds that it would be wise to save the five people by giving them each a one-fifth dose of the medicine. However, she encourages us to interrogate why this feels different from the organ donor case, where we save five people who need organ transplants by sacrificing one person.

“My conclusion is that the distinction between direct and oblique intention plays only a quite subsidiary role in determining what we say in these cases, while the distinction between avoiding injury and bringing aid is very important indeed.”

When the trolley problem is pushed further, the tension between these intuitions becomes even more obvious. As John Hacker-Wright writes, the trolley problem “raises the question of why it seems permissible to steer a trolley aimed at five people toward one person while it seems impermissible to do something such as killing one healthy man to use his organs to save five people who will otherwise die.”

Foot has also contributed to moral philosophy with her writing on determinism and free will, reasons for action, goodness and choice, and discussions of moral beliefs and moral arguments.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

Ethics Explainer: Shame

Flushed cheeks, lowered gaze and an interminable voice in your head criticising your very being.

Imagine you’re invited to two different events by different friends. You decide to go to one rather than the other, but instead of telling the friend whose event you’re skipping the truth, you pretend you’re sick. At first, you might be struck with a bit of guilt for lying to your friend. Later, they see photos of you from the other event and confront you about it.

In situations like this, something other than guilt might creep in. You might start to think it’s more than just a mistake; that this lie is a symptom of a larger problem: that you’re a bad, disrespectful person who doesn’t deserve to be invited to these things in the first place. This is the moral emotion of shame.

Guilt says, “I did something bad”, while shame whispers, “I am bad”.

Shame is a complicated emotion. It’s most often characterised by feelings of inadequacy, humiliation and self-consciousness in relation to ourselves, others or social and cultural standards, sometimes resulting in a sense of exposure or vulnerability. However, philosophers disagree about which of these are necessary aspects of shame.

One approach to understanding shame is through the lens of self-evaluation, which says that shame arises from a discrepancy between self-perception and societal norms or personal standards. According to this view, shame emerges when we perceive ourselves as falling short of our own expectations or the expectations of others – though it’s unclear to what extent internal expectations can be separated from social expectations or the process of socialisation.

Other approaches lean more heavily on our appraisal of social expectations and our perception of how we are viewed by others, even imaginary others. These approaches focus on the arguably unavoidably interpersonal nature of shame, viewing it as a response to social rejection or disapproval.

This social aspect is such a strong part of shame that it can persist even when we’re alone. One way to show this is to draw a comparison between shame and embarrassment. Imagine you’re on an empty street and you trip over, sprawling onto the path. If you’re not immediately overcome with annoyance or rage, you’ll probably be embarrassed.

But there’s no one around to see you, so why?

Similarly, taking the example we began with, imagine instead that no one ever found out that you lied about being sick. It’s possible you might still feel ashamed.

In both of these cases, you’re usually reacting to an imagined audience – you might be momentarily imagining what it would feel like if someone had witnessed what you did, or you might have a moment of viewing yourself from the outside, a second of heightened self-awareness.

Many philosophers who take this social position also see shame as a means of social control – most notably Martha Nussbaum, whose academic career has highlighted the importance of emotions in philosophy and life.

Nussbaum argues that shame is very often ‘normatively distorted’: because shame responds to social norms, we often end up internalising societal prejudices or unjust beliefs, and so feel ashamed of things about ourselves that should not be a source of shame. For example, people often feel ashamed of their race, gender, sexual orientation or disability due to societal stigma and discrimination.

Where shame can go wrong

The idea of shame as an inhibiting and often unjust feeling is shared by many who work with survivors of domestic violence and sexual assault, who note that this distorting effect of shame is what prevents many women from coming forward to report.

Even in cases where shame seems to be an appropriate response, it often still causes damage. At the 2022 Festival of Dangerous Ideas session World Without Rape, panellist and journalist Jess Hill described an advertisement she once saw:

“…a group of male friends call out their mate who was talking to his wife aggressively on the phone. The way in which they called him out came from a place of shame, and then the men went back to having their beers like nothing happened.” Hill encourages us to ask: where will the man in the ad take his shame at the end of the night? It will likely go home with him, perpetuating a cycle of violence.

Co-panellist and historian Joanna Bourke made a similar point: “rapists have extremely high levels of abuse and drug addictions because they actually do feel shame”. Neither of these situations seems ‘normatively distorted’ in Nussbaum’s sense, and yet shame still seems to go wrong. Bourke and other panellists suggested that what is happening here is not necessarily a failing of shame itself, but a failing of the social processes surrounding it.

Shame opens us to vulnerability, but to sit with vulnerability and reflect requires us to be open to very difficult conversations.

If the social framework for these conversations isn’t in place, we end up with either unjust shame, or justified shame that, without support, still ultimately leads to further destruction.

However, this nuance is far from intuitive. While people are saddened by the idea of victims feeling shame, they often feel righteous in asserting that perpetrators of crimes or transgressors of social norms should feel shame, and that a lack of shame is what causes the shameful behaviour in the first place.

Shame certainly has the potential to be a force for good if it reminds us of moral standards, or if the desire to avoid it motivates us to abide by them, but it’s important to remain aware that shame alone is often not enough to define and maintain the ethical playing field.

BY The Ethics Centre


Read me once, shame on you: 10 books, films and podcasts about shame


BY The Ethics Centre 7 MAR 2024

Shame is something we have all experienced at some point. Of all the moral emotions, it can be the most destructive to a healthy sense of self. But do we ever deserve to feel it?

To unpack its complexities, we’ve compiled 10 books, films, series and podcasts that tackle the ethics of shame.

Jon Ronson – Shame Culture, Festival of Dangerous Ideas

In his FODI 2015 talk, Welsh journalist Jon Ronson examines the emergence of public shaming as an internet phenomenon, and how we can combat this culture. Drawing on his book So You’ve Been Publicly Shamed, Ronson highlights several individuals at the centre of high-profile shamings who, after careless actions, were subjected to a relentless lynch mob.

Disgrace – J. M. Coetzee

This novel by South African author J. M. Coetzee tells the story of a middle-aged Cape Town professor’s fall from grace after he is forced to resign from his university over an inappropriate affair. The professor struggles to come to terms with his own behaviour, his sense of self and his relationships with those around him.

The Whale

An American film directed by Darren Aronofsky in which a reclusive and unhealthy English teacher hides out in his flat and eats his way to death. He is desperate to reconnect with his teenage daughter for a last chance at redemption.

The Kite Runner – Khaled Hosseini

A novel by Afghan-American author Khaled Hosseini that tells the story of Amir, a young boy from the Wazir Akbar Khan district of Kabul, and shows how shame can be a destructive force in an individual’s life.

World Without Rape, Festival of Dangerous Ideas

This panel discussion from FODI 2022, with Joanna Bourke, Jess Hill, Saxon Mullins, Bronwyn Penrith and Sisonke Msimang, examines rape and its use in war, in the home and in society as an enduring part of history and modern life. The panellists consider the role of shame from both the victim’s and the perpetrator’s point of view, and whether it is key to tackling the issue.

Muriel’s Wedding

P.J. Hogan’s beloved Australian classic portrays a young social outcast who embezzles money and attempts to fake a new life for herself.

The List – Yomi Adegoke

A British novel by Yomi Adegoke about a high-profile female journalist whose world is upended when her fiancé’s name turns up in a viral social media post. The story is a timely exploration of the real-world impact of online life.

Shame

A British psychological drama directed by Steve McQueen, offering an uncompromising exploration of sex addiction.

Reputation Rehab

An Australian documentary series built on the belief that people shouldn’t be consigned to a cultural scrapheap, and that most people are more than a punchline and deserve a second chance. Hosted by Zoe Norton Lodge and Kirsten Drysdale, its guests include Nick Kyrgios, Abbie Chatfield and Osher Gunsberg.

It’s a Sin

A British TV series depicting the lives of a group of gay men and their friends during the HIV/AIDS crisis in the UK in the 1980s and early 1990s. The series unpacks the mechanics of shame and how it was built into queer lives, potentially affecting their own behaviour.

For a deeper dive, join us for The Ethics of Shame on Wednesday 27 March 2024 at 6:30pm.

Losing the thread: How social media shapes us


BY Daniel Finlay, The Ethics Centre 4 MAR 2024

“I feel like I invited two friend groups to the same party.”

The slowly spiralling mess that is Twitter received another beating last year in the form of a rival platform announcement: Threads. And although this was a potentially exciting development for all the scorned tweeters out there, amid the hype, noise and hubbub of this new platform I noticed something interesting.

Some people weren’t sure how to act.

Twitter has long been associated with performative behaviour of many kinds (as well as genuine activism and journalism of many kinds). Influencers, comedians, politicians and every aspiring Joe Schmoe adopt personas that often amount to some combination of sarcastic, cynical, snarky and bluntly relatable.

Now, you would think that people migrating to a rival app with ostensibly the same function would just port these personas over. And you would be right, except for the hiccup of Threads being tied directly to Instagram accounts.

Why does this matter? As many users have pointed out, the kinds of things people say and do on Twitter and Instagram are markedly different, partially because of the different audiences and partially because of the different medium focus (visual versus textual). As a result, some people are struggling with the concept of having family and friends viewing their Twitter-selves, so to speak.

These posts can of course be taken with a grain of salt. Most people aren’t truly uncomfortable with recreating their Twitter identities on Threads. In fact, somewhat ironically, reinforcing their group identity as “(ex-)Twitter users” is the underlying function of these posts – signalling to other tweeters that “Hey, I’m one of you”.

The incongruity between Instagram and Twitter personas, or modes of expression, has been noted by others in varying depth, and is something you might have noticed yourself if you spend much of your free time on either platform. In short, Instagram is mostly a polished, curated, image-first representation of ourselves, whereas Twitter is mostly a stream-of-consciousness conversation mill (which lends itself to more polarising debate). There are plenty of overlapping users, but the way they appear on each platform is often vastly different.

With this in mind, I’ve been thinking: How do our online identities reflect on us? How do these identities shape how we use other platforms? Do social media personas reflect a type of code-switching or self-monitoring, or are they just another way of pandering to the masses?

What does it say about us when we don’t share certain aspects of ourselves with certain people?

This apparent segmentation of our personality isn’t new or unique to social media. I’m sure you can recall a moment of hesitation or confusion when introducing family to friends, or childhood friends to hobby friends, or work friends to close friends. It’s a feeling that normally stems from having to confront the (sometimes subtle) ways we change how we speak, act and present ourselves around different groups of people.

Maybe you’re a bit more reserved around colleagues, or more comical around acquaintances, or riskier with old friends. Whatever it is, having these worlds collide can get you questioning which “you” is really you.

There isn’t usually an easy answer to that, either. Identity is a slippery thing that philosophers, psychologists and sociologists have been wrangling for a long time. One basic idea is that humans are complex, and we can’t be expected to communicate or display all the elements of our psyche to every person in our lives in the same ways. While that’s a tempting narrative, it’s important to be aware of the difference between adapting and pandering.

Adapting is something we all do to various degrees.

In psychology, it’s called self-monitoring – modifying our behaviours in response to our environment or company. This can be as simple as not swearing in front of family or speaking more formally at work. Sometimes adapting can even feel like a necessity. People on the autism spectrum often “mask” their symptoms and behaviours by suppressing them and/or mimicking neurotypical behaviours to fit in or avoid confrontation.

In lots of ways, social media has enhanced our ability to adapt. The way we appear online can be something highly crafted, but this is where we can sometimes run into the issue of pandering. In this context, by pandering I mean inauthentically expressing ourselves for some kind of personal gain. The key issue here is authenticity.

As Dr Tim Dean said in an earlier article in this series, “you can’t truly understand who someone is without also understanding all the groups to which they belong”. In many ways, social media platforms are themselves groups to which we belong (and they signal our membership of still others), each with their own styles, tones, audiences, expectations and subcultures. But it is this very scaffolding that can cause people to pander to their in-groups, whether simply to fit in, or in search of power, fame or money.

I want to stress that even pandering in and of itself isn’t necessarily unethical. Sometimes pandering is something we need to do; sometimes it’s meaningless or harmless. However, sometimes it amounts to a violation of our own values. Do we really want to be the kinds of people who go against our principles for the sake of fitting in?

That’s what struck me when I read all of the confused messaging on the release of Threads. It’s one thing to not value authenticity very highly; it’s another to disvalue it completely by acting in ways that oppose our core values and principles. Sometimes social media can blur these lines. When we engage in things like mindless dogpiling or reposting uncited or unchecked information, we’re often, without realising it, acting in ways we wouldn’t act elsewhere, and that’s worth reflecting on.

It’s certainly something that I’ve noticed myself reflecting on since then. For some, our online personas can be an outlet for aspects of our personality that we don’t feel welcome expressing elsewhere. But for others, the ease with which social media allows us to craft the way we present poses a challenge to our sense of identity.

BY Daniel Finlay

Daniel is a philosopher, writer and editor. He works at The Ethics Centre as Youth Engagement Coordinator, supporting and developing the futures of young Australians through exposure to ethics.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

Ethics Explainer: Moral hazards

When individuals are able to avoid bearing the costs of their decisions, they can be inclined towards more risky and unethical behaviour.

Sailing across the open sea in a tall ship laden with trade goods is a risky business. All manner of misfortune can strike, from foul weather to uncharted shoals to piracy. Shipping businesses in the 19th century knew this only too well, so when the budding insurance industry started offering to underwrite ships and cargo, covering the costs should a voyage end in misadventure, they jumped at the opportunity.

But the insurance companies started to notice something peculiar: insured ships were more likely to meet with misfortune than ships that were uninsured. And it didn’t seem to be mere coincidence. Instead, it turned out that shipping companies covered by insurance tended to invest less in safety and were more inclined to make risky decisions, such as sailing into more dangerous waters to save time. After all, they had the safety net of insurance to bail them out should anything go awry.

Naturally, the insurance companies were not impressed, and they soon coined a term for this phenomenon: “moral hazard”.

Risky business

Moral hazard is usually defined as the propensity for the insured to take greater risks than they might otherwise take. So the owners of a building insured against fire damage might be less inclined to spend money on smoke alarms and extinguishers. Or an individual who insures their car against theft might be less inclined to invest in a more reliable car alarm.

But it’s a concept that has applications beyond just insurance.

Consider the banks that were bailed out following the 2008 collapse of the subprime mortgage market in the United States. Many were considered “too big to fail”, and it seems they knew it. Their belief that the government would bail them out rather than let them collapse gave the banks’ executives a greater incentive to take riskier bets. And when those bets didn’t pay off, it was the public that had to foot much of the bill for their reckless behaviour.

There is also evidence that the existence of government emergency disaster relief, which helps cover the costs of things like floods or bushfires, might encourage people to build their homes in riskier locations, such as overgrown bushland or coastal areas prone to cyclones or floods.

What makes moral hazards “moral” is that they allow people to avoid taking responsibility for their actions. If they had to bear the full cost of their actions, then they would be more likely to act with greater caution. Things like insurance, disaster relief and bank bailouts all serve to shift the costs of a risky decision from the shoulders of the decision-maker onto others – sometimes placing the burden of that individual’s decision on the wider public.

Perverse incentives

While the term “moral hazard” is typically restricted to examples involving insurance, there is a general principle that applies across many domains of life. If we put people into a situation where they are able to offload the costs of their decisions onto others, then they are more inclined to take risks they would otherwise avoid, or to behave unethically.

For example, a salesperson working for a business they know will be closing in the near future might be more inclined to sell an inferior or faulty product to a customer, knowing that they won’t have to deal with warranty claims.

This means there’s a double edge to moral hazards. One is borne by the individual, who has to resist the opportunity to shirk their personal responsibility. The other is borne by those who create the circumstances that give rise to the moral hazard in the first place.

Consider a business that has a policy saying the last security guard to check whether the back door is locked is held responsible if there is a theft. That might give security guards an incentive to not check the back door as often, thus decreasing the chance that they are the last one to check it, but increasing the chance of theft.

Insurance companies, governments and other decision-makers need to ensure that the policies and systems they put in place don’t create perverse incentives that steer people towards reckless or unethical behaviour. And if they are unable to eliminate moral hazards, they need to put in place other policies that provide oversight and accountability for decision-making, and punish those who act unethically.

Few systems or processes will be perfect, and we always require individuals to exercise their ethical judgement when acting within them. But the more we can avoid creating the conditions for moral hazards, the fewer incentives we’ll create for people to act unethically.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

BFSO Young Ambassadors: Investing in our future leaders

BY The Ethics Centre 15 FEB 2024

In 2018, ethics became a household word in financial services, partly because of the Hayne Royal Commission, which identified the unethical behaviours and cultures within the industry. While behavioural and cultural change starts with individuals, empowerment doesn’t come easily, particularly at the beginning of one’s career.

Aimed at supporting young professionals, the Banking and Financial Services Oath (BFSO) Young Ambassador Program encourages people early in their careers to adopt a strong ethical foundation in the banking and financial services industry.

We sat down with three BFSO alumni, Max Mennen (NAB), Michelle Lim (RBA) and Elle Griffin (CBA), to discuss the impact the program has had on them, and what ethics looks like in an industry that’s been through turmoil and has a responsibility to go above and beyond its legal requirements.

TEC: What first drove you to apply for the BFSO program?

Max: My experience in banking up until I did the BFSO program was in a customer facing role and I was exposed to a lot of opinions about banks – why they’re flawed and their wrongdoings. And that wasn’t just from customers, that was just the general introduction that I had while working.

The BFSO certainly aligned with me questioning these sorts of opinions that I’d heard, finding the truth to them and more so seeing how you can rectify [the behaviours that cause] those opinions. It helped me interrogate what work I was doing and how I could make it better.

Elle: I wanted to make an impact outside of my day-to-day role and feel like I was contributing to something bigger than just the bank, but to the community. It also gave me a real responsibility to think about broader issues going into becoming a banker, that we all had a role to play in improving our profession.

Michelle: Coming from a psychology background, I was really interested in contributing to understanding the culture and the conduct that led to all the issues discussed in the 2017 Hayne Royal Commission. I wanted to contribute to those discussions and hopefully be part of that cultural shift.

TEC: Five years on from the Royal Commission, where do you see the role of the BFSO?

Elle: I have always strongly identified with the idea that the BFSO promotes that we are part of a profession, which means that we are more than just employees of our particular bank. Instead, being a professional means that you have competence, care and diligence about how you approach what it is that you do.

I think that what the Hayne Royal Commission showed was that people didn’t approach banking from that kind of noble profession mindset and the BFSO fills that void for the industry. As long as people consider what we do as a profession, we’ll go a long way to avoiding those outcomes in the future.

Max: I think the BFSO could be valued in any industry because what it mainly showed me was an insight into the value chain of what, in our case, the financial services sector provides. It has an impact on the broader economy, but certainly on everyday customers as well. It’s pretty easy to just view your job quite narrowly as the process that you play rather than looking at the impact of what you stand for and what the organisation does. It certainly gave me much better recognition for the role that financial services play and how they can do it better.

TEC: Do you feel there’s more of an appetite for financial institutions to allow time for participating in programs like the BFSO?

Michelle: The BFSO gave me courage and made me feel empowered to lean into something that I was always interested in, which is social justice and equality and equity. It brings people that are like-minded together who are quite young and new in their career, and it gives them this sense of empowerment and courage to actually lean into what we value and what our principles are and then echo and extend that out and amplify that within our organisations and then beyond.

When I started, five or six years ago, I was in the risk culture space and that was still quite new but now I’ve seen that space grow so much and there’s become a heavy focus into conduct and risk. I think organisations are really seeing the value of carving out dedicated time for ethical leadership and what that looks like, and mapping that back to my ethical framework for my organisation and what our purpose is.

TEC: Has it impacted the way you behave long-term in your role?

Elle: I feel very fortunate to have done the program very early in my career because it gives you a worldview, and a really clear way to interpret who you are and the work that you do day to day working in the financial services sector. And it translates to any role that I’ve had in the past five years. It’s helped me realise that not only that you should feel comfortable to speak up, but that I’m required to speak up and I should be speaking up.

TEC: What would you say to somebody wanting to apply to the BFSO?

Michelle: Give 110% and don’t be afraid to ask questions. And invest in the networks and relationships you’ll make. The biggest thing for me is the relationships.

Max: I would say the impacts can be exponential and they’ll last with you.

Applications for the 2024 BFSO Young Ambassador Program are now open until 15 March.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

Day trading is (nearly) always gambling

BY The Ethics Centre 2 FEB 2024

It could just be our algorithms, but over the past couple of years there’s been a huge increase in the number of online share trading apps advertising across social platforms, YouTube and broadcast/streaming TV, promising quick and easy access to the share trading market.

Historically, buying and selling shares through a stockbroker and getting professional financial advice have been limited to high-net-worth individuals (with the cost of professional financial advice in Australia ranging from $2000 to $5000-plus per annum). But these apps promise an easy, low-barrier entry point and a potential side hustle for people to start buying and selling shares from the comfort of their couch, or even their bathroom.

You don’t need any knowledge of the market and its statistics to start buying and selling at this entry level. As noted in The Ethics Centre’s latest podcast Life and Shares, nearly 5000 Australians opened an online account and started trading in the months after Covid-19 hit our shores, holding their shares for one day on average, with 80% losing money (although most would be classified as ‘toe dippers’ rather than traders).

The issue is that many of these trading apps are gamified, with incentives like points accumulation and (relatively valueless) gifts or benefits the more trades you make. These companies charge a fee for every trade, so it’s in their interest for customers to make as many trades as possible. Research by the Ontario Securities Commission found that groups using gamified apps “made around about 40% more trades than control groups.”

This implicitly encourages day trading, where buyers play on the volatility of the market by buying and selling securities quickly, often in the same day, in the hope of turning a quick profit.

“The general market wisdom is that time in the market generally beats timing the market,” says Angel Xiong, head of the finance department in the School of Economics at RMIT.

Day trading flips this by trying to predict rapid price movements based on the news of the day, and this is where it starts to verge into gambling-adjacent behaviour.

Cameron Buchanan, of the International Day Trading Academy, an ASIC-accredited online training centre, says, “people often treat trading like they are gambling. And the main reason is because most gamblers don’t expect to lose. So emotionally, a lot of us are hardwired to not [want to] experience loss. We want certainty. And if we are coming into the trading markets, there’s a lot of uncertainty in it.”

Even if you know your stats and have a plan for your buy and sell points, Cameron says, “I know from my own experience, you get so locked into that trade and the emotion is so strong. You don’t want to take that loss because of the hope that the market turns around and gives you that positive feeling.”

Xiong studies behavioural financial biases. “One of these biases is something we call attribution bias,” Xiong says, “which means people tend to attribute their success to strategy and skills, but when they lose money, they attribute it to, ‘Oh, it’s just bad luck’. So in terms of whether they [day traders] have strategy, it is really up to debate.” Consequently, Xiong believes, “stock market trading and gambling in casinos or online betting are very similar.”

As Ravi Dutta Powell, Senior Advisor at the Behavioural Insights Team, notes in episode 4, “the more that you gamify it, the more it starts to move into that gambling space and becomes less about the underlying financial products and more about engagement with the app and trying to get people to spend more money even though that may not necessarily be in their best financial interests… The real cost is the increased risk of losing the money you’ve invested because you’re making more trades.”

Addiction specialists, like the clinical psychologist interviewed in episode 3, warn that day traders can develop trading habits that closely resemble gambling addiction. “Certain forms of trading are addictive in and of itself,” the psychologist says. “The more high volatility securities or platforms where you can be in debt to the platform. Those are more risky plays and those can become quite addictive. As somebody who works in gambling, share trading looks identical to that and actually responds to treatment for gambling.”

Symptoms of addictive behaviour include developing an obsession with trading, chasing winning streaks, trying to recoup losses, investing larger and larger amounts to chase the thrill, falling for the sunk cost fallacy, and investing money in shares you haven’t properly researched.

But even if you’re going for “time in the market,” researching your buys thoroughly and buying blue chip stocks, it can still go bad due to that aforementioned “volatility”. Look at the effect of the Covid-19 pandemic on the market, or the stock market crashes of 2002 and 2008, and it becomes clear that no stock is a “sure win”, especially when your emotions are pushing you to avoid a loss and hold on for the market to turn around.

All you can really control, as Life and Shares host Cris Parker puts it in episode 4, is your own approach: ask yourself, “What are my values? How much am I prepared to lose?” And brush up on your financial literacy before you start dropping bundles.

Life and Shares unpicks the share market so you can make decisions you’ll be proud of. Listen now on Spotify and Apple Podcasts.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

Ethical redundancies continue to be missing from the Australian workforce

BY The Ethics Centre Nina Hendy 16 JAN 2024

Telling staff that they have been made redundant is one of the most difficult parts of anyone’s job. But what’s consistently lacking from these hard conversations is human compassion.

All too often, there are glaring examples of a genuine lack of concern or care being shown by major companies forced to let people go.

Think about it for a second. A person’s identity is wrapped up in what they do for work. After introducing ourselves in a social setting, the next thing we usually share about ourselves is what we do for work.

When Cris Parker, Director of The Ethics Centre’s Ethics Alliance, recently called on LinkedIn for a rethink of how redundancies are done (#ethically), plenty of workers chimed in with their own tragic redundancy stories.

One senior manager revealed he wasn’t even paid redundancy when he was laid off by an Australian company some years ago. Management got around this because the law states that employers don’t have to pay redundancy if the company has fewer than 15 employees.

He says he still struggles with what happened all these years later and wants to see the law changed, arguing that the process was unethical and left people unsupported and struggling financially.

The closed door meeting you didn’t expect

No matter how committed you are to your role, suddenly being made redundant can be an emotionally crippling experience.

But it’s all too common. Media reports reveal that 2023 has been a year of mass redundancies as profits have been squeezed. Nearly 23,000 Australians have been laid off in reported redundancy rounds this year in response to rising interest rates and stubbornly high inflation.

But the actual number of redundancies is likely to be much higher, as employers only need to notify Services Australia when they lay off 15 or more staff members.

Among the companies making cuts was KPMG, which announced 200 redundancies in February. Star Entertainment laid off 500 staff in May, while Telstra cut nearly 500 staff in July. The big banks have also been slashing jobs, collectively cutting more than 2000 jobs, according to media reports. There are also examples of companies pushing staff to leave if they don’t return to the office.

Back in the pandemic, employers were more compassionate in many ways. We saw into our bosses’ homes and personal lives and heard about the challenges they were facing. Bonds formed in the workplace that hadn’t been seen before.

At the time, a huge 99% of workers felt that they were working for an empathetic leader during the pandemic, according to Korn Ferry statistics. This is twice as high as before Covid, and it has shaped a new normal that employers need to recognise, even though they may now be facing financial pain in a much more complex economic climate.

Redundancies announced over the lunchroom speaker

Qantas Airways was among the many companies to downsize teams in the wake of Covid, as work was in short supply. In a bid to save a buck, Qantas replaced its ground handling staff with outsourced workers, a decision the Federal Court has since found to be illegal because one of the airline’s reasons for outsourcing was to stop those workers from exercising their workplace rights, such as taking protected industrial action.

In a particularly brutal approach, Qantas reportedly told the 1700 workers about their upcoming dismissal via a lunchroom speaker with no prior warning, which doesn’t demonstrate empathy or compassion.

The case reminds Mollie Eckersley, ANZ operations manager for BrightHR, of the CEO of US-based Better.com, who made headlines for making 900 of his staff redundant via an impersonal Zoom call.

She’s the first to accept that redundancies can’t always be avoided if a business is to stay viable, but cautions that how they are announced to staff can have lasting effects on the people involved.

Approaching job cuts with transparency, openness and empathy will ease what is already a difficult experience. If it isn’t done well, the negative impact on workplace culture can be long-lasting.

“The Qantas case has highlighted and served a cautionary tale for other businesses considering redundancy plans for its employees. There’s a clear need for robust records – otherwise businesses risk legal action and irreparable damage to reputation. Specific steps must be followed to ensure the process is fair and legal,” says Eckersley.

The rules state that the employer must initiate a meeting with the employee to discuss proposed changes, and that in that meeting, the staffer should be allowed to express concerns, provide feedback and suggest alternatives to redundancy.

But what about the ethical part of this process? People need to be treated with compassion, and giving the employee reasons for letting them go can help soften the blow.

Asking who might like to take a redundancy can be a good first step, because some employees might already have one foot out the door.

Organisations need to ensure they are acting in line with their values. Integrity, care and ethics need to be embedded into the process of making people redundant, particularly when those difficult decisions are made and there are very real human consequences.

This can be done by ensuring that your organisation has a purpose that can inspire those who remain, and by being transparent about the reasons for the redundancy, rather than leaving staff to wonder whether they might be next.

A great deal of consideration should also be given to the timing of a redundancy announcement. Alternatives to redundancy, such as offering staff shorter working weeks or even reducing pay for a set period of time, should also be considered.

Growing unemployment, mental health issues and the treatment of workers aren’t being addressed by the largest companies, which are using redundancy as a lever amid economic woes, and our government seems intent on allowing the status quo to continue.

We’re all human beings, after all.
