Ethics Explainer: Truth & Honesty

How do we know we’re telling the truth? If someone asks you for the time, do you ever consider the accuracy of your response?

In everyday life, truth is often thought of as a simple concept. Something is factual, false, or unknown. Similarly, honesty is usually seen as the difference between ‘telling the truth’ and lying (with some grey areas like white lies or equivocations in between). ‘Telling the truth’ is somewhat of a misnomer, though. Since honesty is mostly about sincerity, people can be honest without being accurate about the truth.

In philosophy, truth is anything but simple and weaves itself into a host of other areas. In epistemology, for example, philosophers interrogate the nature of truth by looking at it through the lens of knowledge.

After all, if we want to be truthful, we need to know what is true.

Figuring that out can be hard, not just practically, but metaphysically.

Theories of Truth

There are several competing theories that attempt to explain what truth is, the most popular of which is the correspondence theory. Correspondence refers to the way our minds relate to reality. In it, truth is a belief or statement that corresponds to how the world ‘really’ is independent of our minds or perceptions of it. As popular as this theory is, it does prompt the question: how do we know what the world is like outside of our experience of it?

Many people, especially scientists and philosophers, have to grapple with the idea that we are limited in our ability to understand reality. For every new discovery, there seems to be another question left unanswered. This being the case, the correspondence theory leads us to a problem of not being able to speak about things being true because we don’t have an accurate understanding of reality.

Another theory of truth is the coherence theory. This states that truth is a matter of coherence within and between systems of beliefs. Rather than the truth of our beliefs relying on a relation to the external world, it relies on their consistency with other beliefs within a system.

The strength of this theory is that it doesn’t depend on us having an accurate understanding of reality in order for us to speak about something being true. The weakness is that we can imagine several different comprehensive and coherent systems of beliefs, and thus different people holding different ‘true’ beliefs that are impossible to adjudicate between.

Yet another theory of truth is pragmatist, although there are a couple of varieties, as with pragmatism in general. Broadly, we can think of pragmatist truth as a more lenient and practical correspondence theory.

For pragmatists, what the world is ‘really’ like only matters as far as it impacts the usefulness of our beliefs in practice.

So, pragmatist truth is in a sense malleable; it, like the scientific method it’s closely linked with, sees truth as a useful tool for understanding the world, but recognises that with new information and experiment the ‘truth’ will change.

Ethical aspects of truth and honesty

Regardless of the theory of truth that you subscribe to, there are practical applications of truth that have a significant impact on how to behave ethically. One of these applications is honesty.

Honesty, in a simple sense, is speaking what we wholeheartedly believe to be true.

Honesty comes up a lot in classical ethical frameworks and, as with lots of ethical concepts, isn’t as straightforward as it seems.

In Aristotelian virtue ethics, honesty permeates many other virtues, like friendship, but is also a virtue in itself that lies between habitual lying and boastfulness or callousness. So, a virtue ethicist might say a severe lack of honesty would result in someone who is untrustworthy or misleading, while too much honesty might result in someone who says unnecessary truthful things at the expense of people’s feelings.

A classic example is a friend who asks you for your opinion on what they’re wearing. Let’s say you don’t think what they’re wearing is nice or flattering. You could be overly honest and hurt their feelings, you could lie and potentially embarrass them, or you could frame your honesty in a way that is moderate and constructive, like “I think this other colour/fit suits you better”.

This middle ground is also often where consequentialism lands on these kinds of interpersonal truth dynamics because of its focus on consequences. Broadly, the truth is important for social cohesion, but consequentialism might tell us to act with a bit more or a bit less honesty depending on the individual situations and outcomes, like if the truth would cause significant harm.

Deontology, on the other hand, following in the footsteps of Immanuel Kant, holds honesty as an absolute moral obligation. Kant was known to say that honesty was imperative even if a murderer was at your door asking where your friend was!

Outside of the general moral frameworks, there are some interesting ethical questions we can ask about the nature of our obligations to truth. Do certain people or relations have a stronger right to the truth? For example, many people find it acceptable and even useful to lie to children, especially when they’re young. Does this imply age or maturity has an impact on our right to the truth? If the answer to this is that it’s okay in a paternalistic capacity, then why doesn’t that usually fly with adults?

What about if we compare strangers to friends and family? Why do we intuitively feel that our close friends or family ‘deserve’ the truth from us, while someone off the street doesn’t?

If we do have a moral obligation towards the truth, does this also imply an obligation to keep ourselves well-informed so that we can be truthful in a meaningful way?

The nature of truth remains elusive, yet the way we treat it in our interpersonal lives is still as relevant as ever. Honesty is a useful and easier way of framing lots of conversations about truth, although it has its own complexities to be aware of, like the limits of its virtue.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

Ethics Explainer: Critical Race Theory

BY The Ethics Centre 12 SEP 2022

Critical Race Theory (CRT) seeks to explain the multitude of ways that race and racism have become embedded in modern societies. The core idea is that we need to look beyond individual acts of racism and make structural changes to prevent and remedy racial discrimination.

History

Despite debates about Critical Race Theory hitting the headlines relatively recently, the theory has been around for over 30 years. It was originally developed in the 1980s by Derrick Bell, a prominent civil rights activist and legal scholar. Bell argued that racial discrimination didn’t just occur because of individual prejudices but also because of systemic forces, including discriminatory laws, regulations and institutional biases in education, welfare and healthcare.

During the 1950s and 1960s in America, there were many legal changes that moved the country towards racial equality. Some of the most significant include the Supreme Court’s decision in Brown v. Board of Education, which explicitly banned racial segregation in American public schools, the Civil Rights Act of 1964 and the Voting Rights Act of 1965.

These rulings and laws formally criminalised segregation, legalised interracial marriage and reduced restrictions in access to the ballot box that had been commonplace in many parts of America since the 1870s. There was also a concerted effort across education and the media to combat racially discriminatory beliefs and attitudes.

However, legal scholars noticed that in spite of these prominent efforts, racism persisted throughout the country. How could racial equality be mandated by the highest court in America, and yet racial discrimination still occur every day?

Overview

Critical race theory, often shortened to CRT, is an academic framework that developed out of legal scholarship seeking to explain how institutions like the law perpetuate racial discrimination. The theory evolved to have an additional focus on how to change structures and institutions to produce a more equitable world. Today, CRT is mostly confined to academia, and while some elements of CRT may inform parts of primary and secondary education, very few schools teach CRT in its full form.

Some of the foundational principles of CRT are:

1. CRT asserts that race is socially constructed. This means that the social and behavioural differences we see between different racialised groups are products of the society that they live in, not something biological or “natural.”

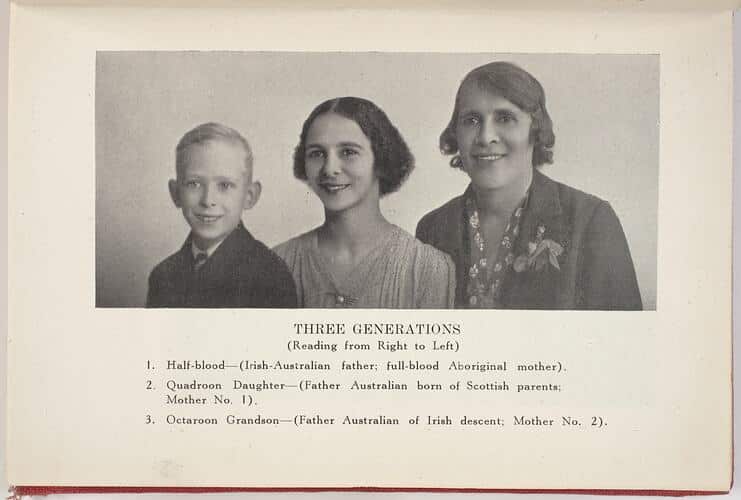

There is a long history of people using science to attempt to prove that there were significant social and psychological differences among people of different racial groups. They claimed these differences justified the poor treatment of people of different ‘inferior races’, or the ‘breeding out’ of certain races. This is how white Australians justified the atrocities committed in the Stolen Generations, such as the attempted ‘breeding out’ of Aboriginal people.

2. Racism is systemic and institutional. Imagine if everyone in the world magically erased all their racial biases. Racism would still exist, because there are systems and institutions that uphold racial discrimination, even if the people within them aren’t necessarily racist.

There are many examples of systemic and institutional racism around the world. They become evident when a system doesn’t have anything explicitly racist or discriminatory about it, but there are still differences in who benefits from that system. One example is the education system: it’s not explicitly racist, but students of different racial backgrounds have different educational outcomes and levels of attainment. In the US, this occurs because public schools are funded by both local and state governments, which means that children going to school in lower socioeconomic areas will be attending schools that receive less funding. Statistically, people of colour are more likely to live in lower socioeconomic areas of America. So, even though the education system isn’t explicitly racist (i.e., treating students of one racial background differently from students of a different racial background), their racial background still impacts their educational outcomes.

3. There is often more than one part of identity that can impact a person’s interaction with systems and institutions in society. Race is just one of many parts of identity that influence how a person will interact with the world. Different identities, including race, gender, sexuality, socioeconomic status, religion and ability, intersect with each other and compound. This is an idea known as “intersectionality.”

Most of the time, it’s not just one part of a person’s identity that is impacting their experiences in the world. Someone who is a Black woman will experience racism differently from a Black man, because gender will impact experience, just like race. A wealthy Chinese-Australian person will have a different experience living in Australia than a working class Chinese-Australian person. Ultimately, CRT tells us that we need to look at race in conjunction with other facets of identity that impact a person’s experience.

Critical Race Theory and racism in Australia

As Australians, it’s easy to point the finger at the US and think “well, at least we aren’t as bad as them.” However, this mentality of only focusing on the worst instances of racism means we often ignore the happenings closer to home. A 2021 survey conducted by the ABC found that 76% of Australians from a non-European background reported experiencing racial discrimination. One-third of all Australians have experienced racism in the workplace and two-thirds of students from non-Anglo backgrounds have experienced racism in school.

In addition to frequent instances of racism, Australia’s history is fraught with racism that is predominantly left out of high school history textbooks. From our early colonial history to racial discrimination during the gold rush in the 1850s to anti-immigration rhetoric today, we don’t need to look far for examples of racial discrimination. A little known part of Australian history is that non-British immigrants from 1901 until the 1960s were told that if they moved to Australia, they had to shed their languages and culture.

Even though CRT originates in the US, it is a useful framework for encouraging a closer analysis of Australia’s racist history and how this has caused the imbalances and inequalities we see today. And once we understand the systemic and institutional forces that promote or sustain racial injustice, we can take measures to correct them to produce more equitable outcomes for all.

If you want to learn more about how race has impacted the world today, here are some good places to start:

- Nell Painter’s Soul Murder and Slavery – her work has focused on the generational psychological impact of the trauma of slavery. Here is an interview where Painter talks a little bit about her work.

- Nikole Hannah-Jones’ 1619 Project, with the New York Times – you can listen to the podcast on Spotify, which has six great episodes on some of the less reported ways that slavery has impacted the functioning of US society.

- Dear White People – a Netflix show that deals with some of the complications of race on a US college campus.

- Ladies in Black – a movie about Sydney c. 1950s, shows many instances of the casual racism towards refugees and immigrants from Europe.

For a deeper dive on Critical Race Theory, Claire G. Coleman presents Words Are Weapons and Sisonke Msimang and Stan Grant present Precious White Lives as part of Festival of Dangerous Ideas 2022. Tickets on sale now.

Ethics Explainer: Gender

BY The Ethics Centre 10 AUG 2022

Gender is a complex social concept that broadly refers to characteristics, like roles, behaviours and norms, associated with masculinity and femininity.

Historically, gender in Western cultures has been a simple thing to define because it was seen as an extension of biological sex: ‘women’ were human females and ‘men’ were human males, where female and male were understood as biological categories.

This was due to a view that espouses the idea that biology (i.e., sex) predetermines or limits a host of social, psychological and behavioural traits that are inherently different between men and women, a view often referred to as biological determinism. This is where we get stereotypes like “men are rational and unemotional” and “women are passive and caring”.

While most people reject biologically deterministic views today, most still don’t distinguish between sex and gender. However, the conversation is slowly beginning to shift as a result of decades of feminist literature.

Additionally, it’s worth noting that outside of Western traditions, gender has been a much more fluid and complex concept for thousands of years. Hundreds of traditional cultures around the world have conceptions of gender that extend beyond the binary of men and women.

Feminist Gender Theory

Feminism has had a long history of challenging assumptions about gender, especially since the late twentieth century. Alongside some psychologists at the time, feminists began differentiating between sex and gender to argue that many of the differences between men and women that people took to be intrinsic were really the result of social and cultural conditioning.

Prior to this, sex and gender were thought to be essentially the same thing. This encouraged people to confer biological differences onto social and cultural expectations. Feminists argue that this is a self-fulfilling misconception that produces oppression in many different ways; for example, socially and culturally limiting attitudes that prevent women from engaging in “masculine” activities and vice versa.

Really, they say, gender is social and sex is biological. Philosopher Simone de Beauvoir famously said: “One is not born, but rather becomes, a woman”.

Gender being social means that it’s a concept that is constructed and shaped by our perceptions of masculinity and femininity, and that it can vary between societies and cultures. Sex being biological means that it’s scientifically observable (though the idea of binary sex is also being questioned given there are over 100 million intersex people all over the world).

Philosophers like Simone de Beauvoir argued that gendered assumptions and expectations were so deeply engrained in our lives that they began to appear biologically predetermined, which gave credence to the idea of women being subservient because they were biologically so.

“Social discrimination produces in women moral and intellectual effects so profound that they appear to be caused by nature.”

Gender and Identity

Gender being socially constructed means that it is mutable. With this increasingly mainstream understanding, people with more diverse gender identities than simply that which they were assigned at birth (cisgender) have been able to identify themselves in ways that more closely reflect their experiences and expressions.

For example, some people identify with a different gender than what they were assigned at birth based on their sex (transgender); some people don’t identify as either man or woman, and instead feel that they are somewhere in between, or that the binary conception of gender doesn’t fit their experience and identity at all (non-binary). In many non-western cultures, gender has never been a binary concept.

Unfortunately, with the inherently identity-based nature of gender, a host of ethical issues arise mostly in the form of discrimination.

Transgender people, for example, are often the target of discrimination. This can range from areas as simple as what bathrooms they use to more complicated areas like participation in elite sports. Notably, these examples of discrimination are almost always targeted at transfeminine people (those who identify as women after being assigned male at birth).

Additionally, there are ethical considerations that have to be taken into account when young people, particularly minors, make decisions about affirming their gender. Currently, it’s standard medical practice for people under 18 to be barred from making decisions about permanent medical procedures, though this still allows them to (with professional, medical guidance) take puberty blockers that help to mitigate extra dysphoria linked to undergoing puberty in a gender the person doesn’t identify with.

Gender stereotypes in general also have negative effects on all genders. Genderqueer people are often the targets of violence and discrimination. Women have historically been and are still oppressed in many ways because of systemic gender biases, like being discouraged from working in certain fields, being paid less for similar work or being harassed in various areas of their lives. Men also face harmful effects of rigid gender norms that often result in risk-taking behaviour, internalisation of mental health struggles, and violent or anti-social behaviour.

The Future of Gender

This has been an overview of the most common views on gender. However, there are also many variations on the traditional feminist view that other feminists argue are more accurate depictions of reality.

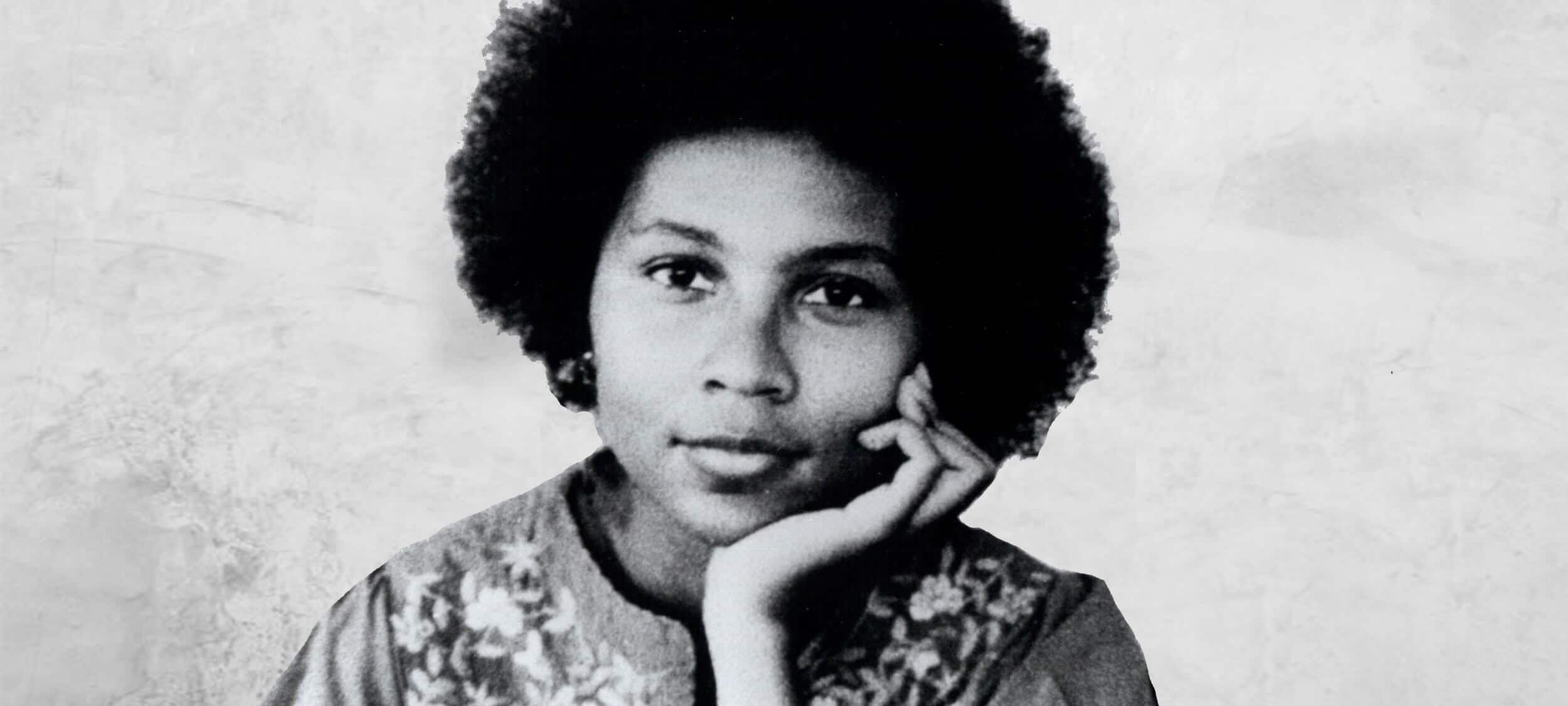

bell hooks was known to criticise some variations of gender that revolved around sexuality because they did not properly account for the way that class, race and socio-economic status changed the way that a woman was viewed and expected to behave. For example, many views of gender are from the perspective of white, western women and so fail to represent women in more marginalised circumstances.

Along similar lines, Judith Butler criticises the very idea of grouping people into genders, arguing that it is and will always be inherently normative and hence exclusionary. For Butler, gender is not simply about identity, it’s primarily about equality and justice.

Even some earlier gender theorists like Gayle Rubin argue for the eventual abolishment of gender from society, in which people are free to express themselves in whatever individual way they desire, free from any norms or expectations based on their biology and subsequent socialisation.

“The dream I find most compelling is one of an androgynous and genderless (though not sexless) society, in which one’s sexual anatomy is irrelevant to who one is, what one does, and with whom one makes love.”

Gender is currently a very active area of research and debate, not only in philosophy, but also in sociology, politics and LGBTQI+ education. While theories about identity often result in conflict due to their inherently personal nature, it’s promising to see such a clear area where work by philosophers has significantly influenced public discourse with profound effects on many people’s lives.

For a deeper dive on gender, Alok Vaid-Menon presents Beyond the Gender Binary as part of Festival of Dangerous Ideas 2022. Tickets on sale now.

Ethics Explainer: Social philosophy

BY The Ethics Centre 7 JUL 2022

Social philosophy is concerned with anything and everything about society and the people who live in it.

What’s the difference between a house and a cave, or a garden and a field of wildflowers? There are some things that are built by people, such as houses and gardens, that wouldn’t exist without human intervention. Similarly, there are some things that are natural, such as caves and fields of wildflowers, that would continue to exist as they were without humans. However, there is a grey area in the middle that social philosophers study, including topics like gender, race, ethics, law, politics, and relationships. Social philosophers spend their time parsing what parts of the world are constructed by humans and what parts are natural.

We can see the beginnings of the philosophical debate of social versus natural through Aristotle’s and Plato’s justifications for slavery. Aristotle believed that some people were incapable of being their own masters, and this was a natural difference between a slave and a free person. Plato, on the other hand, believed that anyone who was inferior to the Greeks could be enslaved, a difference that was made possible by the existence of Greek society.

Through the Middle Ages, attention turned to questioning religion and the divine right of monarchs. During this era, it was believed that monarchs were given their authority by God, which was why they had so much more power than the average person. British philosopher John Locke is well known for arguing that every man was created equal, and that everyone had an equal right to life, liberty, and property. His conclusion was that these fundamental rights were natural to everyone, which contradicted the social norms that gave almost unlimited power to monarchs. Accepting that a monarch naturally had the same fundamental rights as someone who worked the land would require fundamentally changing the structure of society.

During the 19th century, some philosophers began to question social categories and where they came from. Many people at the time held that social classes, or groups of people of the same socioeconomic status, were a result of biological, or natural, differences between people. Karl Marx, known for his 1848 pamphlet The Communist Manifesto, proposed his own theory about social classes. He argued that these socioeconomic differences that formed social differences were a result of the type of work that someone did and therefore social classes were socially, not biologically, constructed.

Today, social philosophers are concerned with a variety of questions, including questions about race, gender, social change, and institutions that contribute to inequality. One example of a social philosopher who studies gender and race is Sally Haslanger. She has spent her time asking what the defining characteristics of gender and race are, and where these characteristics come from. In other cases, social philosophy is blended with cognitive psychology and behavioural studies, asking which of our behaviours are influenced by the society we live in and which behaviours are “natural,” or a product of our biology.

Social philosophy and ethics

Many of the questions social philosophers are concerned with are intertwined with ethics. Part of living in a society requires an (often unwritten) ethical code of conduct that ensures everything functions smoothly.

Thomas Hobbes’ social contract theory spells out the connection between a society and ethics. Hobbes believed that instead of ethics being something that existed naturally, a code of ethics and morality would arise when a group of free, self-interested, and rational people lived together in a society. Ethics would arise because people would find that better things could come from working together and trusting each other than would arise from doing everything on their own.

Today, much of how we act is determined by the societies we live in. The kinds of clothes we wear, the media we interact with, and how we talk to each other change depending on the norms of our society. This can complicate ethics: should we change our ethical code when we move to a different society with different norms? For example, one culture may say that it’s morally acceptable to eat meat, while a different culture may not. Should a person have to change the way they act moving from the meat-eating culture to the non-meat-eating culture? Moral relativists would say it is possible for both cultures to be morally right, and that we should act accordingly depending on which culture we are interacting with.

A significant reason that social philosophy is still such a nebulous field is that everyone has different life experiences and interacts with society differently. Additionally, different people feel like they owe different levels of commitment to the people around them. Ultimately, it’s a serious challenge for philosophers to come up with social theories that resonate with everyone the theory is supposed to include.

Ethics Explainer: Trust

Trust forms the foundation for relationships, cooperation, social interaction and the development of societies, but it is as dangerous as it is important.

From hunter-gatherers to globalised societies, trust is the essential lubricant of social functioning at any scale.

Imagine something as simple as driving to get groceries. You leave your house, get in your car and drive to the shops. In doing so, you’re relying on several overlapping layers of trust that are engrained in our society. You trust that:

- your neighbours won’t break into your house while you’re gone

- the police will deal with them appropriately if they do

- the insurance company will deal with you fairly if they do

- the manufacturer of your car was responsible and competent

- the other drivers on the road will all obey the traffic laws

- your money has retained its value and that the shopkeeper will take it

and so on. Almost every aspect of our lives depends on these underlying relationships of trust with the people around us, and when they are betrayed – especially repeatedly – they can crumble and leave behind instability.

When we trust others, we’re depending on them to fulfil expectations that aren’t guaranteed to be met and that leaves us vulnerable or at risk.

Vulnerability is an important aspect of trust; it creates a tricky tension between our need to rely on others and our need to protect ourselves from risk and harm. To avoid vulnerability and guard against betrayal, we might decide not to trust anyone, but that leads to a miserable life: a life devoid of friendship or intimacy, and ultimately of convenience too, as we need to trust strangers on an almost daily basis, from taxi drivers to teachers to police.

So, we must trust others and learn how and when to be vulnerable to live a fulfilling life.

We trust people by giving them the space and freedom to do what they have been trusted to do, without necessarily observing or overseeing them, with the expectation that they’re:

- Competent enough to do what they have been trusted to do and

- Willing to do it.

“Trust is an ability to rely on somebody to do what they have said they will do, even when no one is watching them” – Simon Longstaff AO

Trust and trustworthiness

An important distinction is the difference between trust and trustworthiness. Trust is an attitude that we have towards others (or sometimes ourselves!) that indicates our hope or expectation that the object of our trust is trustworthy.

Trustworthiness is a property or characteristic of others and in ideal situations has a reciprocal relationship with trust. That is, ideally, trust is an attitude towards trustworthy people, and trustworthy people will be trusted.

Of course, we have all experienced that we don’t live in an ideal world. Often untrustworthy people are perceived to be trustworthy because of lies, clever marketing or overwhelming charisma. Equally, trustworthy people can often be misrepresented to appear untrustworthy.

Interpersonal trust and institutional trust

There are two kinds of trust, the most common and intuitive kind being interpersonal trust – that is, trust between individuals. We might trust our friends with secrets or trust our family with babysitting or trust other drivers with our lives on the road.

A trickier kind of trust is trust in institutions and governments. They’re not as directly accessible as individual people.

Nevertheless, we can and do trust institutions and governments to do as they say they will do, or what they are supposed to do: act in the interests of their people. This is a hallmark of society. But when this trust is eroded, we are left with an eroded society.

The ethics of trust

One of the first practical problems is knowing whom to trust. It’s easy to rely on perceived authority figures in our lives, and often we will simply need to trust that the people who are close to us are looking out for us, but in general we should be on the lookout for consistent moral behaviour.

Ultimately, there is no way to know whom to trust with certainty, but there are many indicators we can use to decide. Are they an honest person? Have they been reliable in the past? Are they self-centred or do they concern themselves with the wellbeing of others? Answering questions like these can help to minimise the risk you take on if you choose to trust someone.

- How do we rebuild trust?

- How should we act if we don’t trust someone?

- If someone breaks our trust, should we distrust them?

- When or how often should we re-examine our trust of someone?

- When is trust a good thing? When is it bad?

- What’s so important about vulnerability?

Answering these questions involves a complex mix of knowing how trust works, knowing the habits, motives and values of others, and knowing ourselves.

BY The Ethics Centre

The Ethics Centre is a not-for-profit organisation developing innovative programs, services and experiences, designed to bring ethics to the centre of professional and personal life.

Ethics Explainer: Teleology

Often, when we try to understand something, we ask questions like “What is it for?”. Knowing something’s purpose or end-goal is commonly seen as integral to comprehending or constructing it. This is the practice or viewpoint of teleology.

Teleology comes from two Greek words: telos, meaning “end, purpose or goal”, and logos, meaning “explanation or reason”.

From this, we get teleology: an explanation of something that refers to its end, purpose or goal.

For example, take a kitchen knife. We might ask why a knife takes the form and features that it does. If we referred to the past – to the process of its making, for example – that would be a causal (etiological) explanation. But a teleological explanation would be something that refers to its end, like: “Its purpose is to cut”. Someone might then ask: “But what makes a good knife?”, and the answer would be: “A good knife is a knife that cuts well.” It’s this guiding principle – knowing and focusing on the purpose – that allows knife-makers to make confident decisions in the smithing process and know that their knife is good, even if it’s never used.

What once was an acorn…

In Western philosophy, teleology originated in the writings and ideas of Plato and then Aristotle. For the Ancient Greeks, telos was a bit more grounded in the inherent nature of things compared to the man-made example of a knife.

For example, a seed’s telos is to grow into an adult plant. An acorn’s telos is to grow into an oak tree. A chair’s telos is to be sat on. For Aristotle, a telos didn’t necessarily need to involve any deliberation, intention or intelligence.

However, this is where teleological explanations have caused issues.

Teleological explanations are sometimes used in evolutionary biology as a kind of shorthand, much to the dismay of many scientists. This is because the teleological phrasing of biological traits can falsely present the facts as supporting some kind of intelligent design.

For example, take the long neck of giraffes. A shorthand teleological explanation of this trait might be that “evolution gave giraffes long necks for the purpose of reaching less competitive food sources”. However, this explanation wrongly implies some kind of forward-looking purpose for evolved traits, or that there is some kind of intention baked into evolution.

Instead, evolutionary biology suggests that giraffes with short necks were less likely to survive, leaving the longer-necked giraffes to breed and pass on their long-neck genes, eventually increasing the average length of their necks.

Notice how the accurate explanation doesn’t refer to any purpose or goal. This kind of description is needed when talking about things like nature or people (at least, if you don’t believe in gods), though teleological explanations can still be useful elsewhere.

Ethics and decision-making

Teleology is more helpful and impactful in ethics, or decision-making in general.

Aristotle was a big proponent of human teleology, seen in the concept of eudaimonia (flourishing). He believed that human flourishing was the goal or purpose of each person, and that we could all strive towards this “life well-lived” by living in moderation, according to various virtues.

Teleology is also often compared or confused with consequentialism, but they are not the same. If you were to take a business that specialises in home security, for example, a consequentialist would tell you to look at the consequences of your service to see if it is effective and good. Sometimes, though, it will be hard to tell if the outcome (e.g., fewer break-ins or attempted break-ins) can be attributed to your business and not other factors, like changes in laws, policing, homelessness, etc., or you might not yet have any outcomes to analyse.

Instead, teleological approaches to business decision-making would have you focus on the purpose of your service, i.e., to prevent home intrusion and ensure security. With that in mind, you could construct your services to meet these goals in a variety of ways, keeping this purpose in mind when making hiring decisions, planning redundancies, etc., and be confident that your service would fulfil its purpose well (even if it is never needed!).

But how do we decide what a good purpose is?

Simply using a teleological lens doesn’t make us ethical. If we’re trying to be ethical, we want to make sure that our purpose itself is good. One way to do this is to find a purpose that is intrinsically good – things like justice, security, health and happiness, rather than things that are a means to an end, like profit or personal gain.

This viewpoint needn’t only apply to business. In trying to be better, more ethical people, we can employ these same teleological views and principles to inform our own decisions and actions. Rather than thinking about the consequences of our actions, we can instead think about what purpose we’re trying to achieve, and then form our decisions based on whether they align with that purpose.

Ethics Explainer: Power

BY The Ethics Centre 11 MAR 2022

“If a white man wants to lynch me, that’s his problem. If he’s got the power to lynch me, that’s my problem. It’s not a question of attitude; it’s a question of power.” – Stokely Carmichael

A central concern of justice is who has power and how they should be allowed to use it. A central concern of the rest of us is how people with power in fact do use it. Both questions have animated ethicists and activists for hundreds of years, and their insights may help us as we try to create a just society.

A classic formulation is given by the eminent sociologist Max Weber, for whom power is “the probability that one actor within a social relationship will be in a position to carry out his own will despite resistance”. Michel Foucault, one of the twentieth century’s most prominent theorists of power, seems to echo this view: “if we speak of the structures or the mechanisms of power, it is only insofar as we suppose that certain persons exercise power over others”.

A rival view holds that instead of being a relation, power is a resource: like water, food, or money, power is a resource that a particular person or institution can accrue and it can therefore be justly or unjustly distributed. This view has been especially popular among feminist theorists who have used economic models of resource distribution to talk about gendered inequalities in social resources, including and especially power.

Susan Moller Okin is one prominent voice in this tradition:

“When we look seriously at the distribution of such critical social goods as power, self-esteem, opportunities for self-development … we find socially constructed inequalities between them, right down the list”.

What’s the difference between these two views? Why care? One answer is that our efforts to make power more just in society will depend on what kind of thing it is: if it’s a resource, such that problems of unfair power are problems of unequal distribution, we might be able to improve things by removing some power from some people – that way, they would no longer have more than others. This strategy would be less likely to work if power was a relation.

In addition to working out what power is, there are important moral questions about when it can be ethically used. This is a pressing question: As long as we live in societies, under democratic governments, or in states that use police forces and militaries to secure our goals, there will be at least one form of power to which everyone is subject: the power of the state.

The state is one of the only legitimate bearers of the power to use violence. If anyone else uses a weapon or a threat of imprisonment to secure their goals, we think they’re behaving illegitimately, but when the state does these things, we think it is – or can be – legitimate.

Since Plato, democracies have agreed that we need to allow and centralise some coercive power if we are to enforce our laws. Given the state’s unique power to use violence, it’s especially important that that power be just and fair. However, it’s challenging to spell out what fair power is inside a democracy or how to design a system that will trend towards exemplifying it.

As Douglas Adams once wrote:

“The major problem with governing people – one of the major problems, for there are many – is that no-one capable of getting themselves elected should on any account be allowed to do the job”.

One recurring question for ‘fairness’ in political power is whether the people governed by the relevant political authority have a duty to obey that authority. When a state has the power to set laws and enforce them, for instance, does this issue a correlate duty for citizens to obey those laws? The state has duties to its people because it has so much power; but do people have reciprocal duties to their state, also rooted in its power?

Transposing this question into our personal lives, it’s sometimes thought that each of us has a kind of moral power to extract behaviour from others. If you don’t keep your promise, I can blame or sanction you into doing what you said you would. In other words, I can exercise my moral power to make claims of you. Does this sort of power work in the same way as political power? Is it possible for me to abuse my moral power over you, using it in ways that are unjust or unfair – and might you have a duty to obey that moral power?

Finally, we can ask valuable questions about what it is to be powerless. It’s certainly a site of complaint: many of us protest or object when we feel powerless. But how should we best understand it? Is powerlessness about actually being interfered with by others, or simply being susceptible to it, or vulnerable to it? For prominent philosopher Philip Pettit (AC), it’s the latter – to be “unfree” is to be vulnerable or susceptible to other people’s whims, irrespective of whether they actually use their power against us.

If we want a more ethically ordered society, it’s important to understand how power works – and what goes wrong when it doesn’t.

Ethics Explainer: Beauty

Research shows that physical appearance can affect everything from the grades of students to the sentencing of convicted criminals – are looks and morality somehow related?

Ancient philosophers spoke of beauty as a supreme value, akin to goodness and truth. The word itself alluded to far more than aesthetic appeal, implying nobility and honour – its counterpart, ugliness, made all the more shameful in comparison.

From the writings of Plato to Heraclitus, beautiful things were argued to be vital links between finite humans and the infinite divine. Indeed, across various cultures and epochs, beauty was praised as a virtue in and of itself; to be beautiful was to be good and to be good was to be beautiful.

When people first began to ask, ‘what makes something (or someone) beautiful?’, they came up with some weird ideas – think Pythagorean triangles and golden ratios as opposed to pretty colours and chiselled abs. Such aesthetic ideals of order and harmony contrasted with the chaos of the time and are present throughout art history.

Leonardo da Vinci, Vitruvian Man, c.1490

These days, a more artificial understanding of beauty as a mere observable quality shared by supermodels and idyllic sunsets reigns supreme.

This is because the rise of modern science necessitated a reappraisal of many important philosophical concepts. Beauty lost relevance as a supreme value of moral significance in a time when empirical knowledge and reason triumphed over religion and emotion.

Yet, as the emergence of a unique branch of philosophy, aesthetics, revealed, many still wondered what made something beautiful to look at – even if, in the modern sense, beauty is only skin deep.

Beauty: in the eye of the beholder?

In the ancient and medieval era, it was widely understood that certain things were beautiful not because of how they were perceived, but rather because of an independent quality that appealed universally and was unequivocally good. According to thinkers such as Aristotle and Thomas Aquinas, this was determined by forces beyond human control and understanding.

Over time, this idea of beauty as entirely objective became demonstrably flawed. After all, if this truly were the case, then controversy wouldn’t exist over whether things are beautiful or not. For instance, to some, the Mona Lisa is a truly wonderful piece of art – to others, evidence that Da Vinci urgently needed an eye check.

Consequently, definitions of beauty that accounted for these differences in opinion began to gain credence. David Hume famously quipped that beauty “exists merely in the mind which contemplates”. To him and many others, the enjoyable experience associated with the consumption of beautiful things was derived from personal taste, making the concept inherently subjective.

This idea of beauty as a fundamentally pleasurable emotional response is perhaps the closest thing we have to a consensus among philosophers with otherwise divergent understandings of the concept.

Returning to the debate at hand: if beauty is not at least somewhat universal, then why do hundreds and thousands of people every year visit art galleries and cosmetic surgeons in pursuit of it? How can advertising companies sell us products on the premise that they will make us more beautiful if everyone has a different idea of what that looks like? Neither subjectivist nor objectivist accounts of the concept seem to adequately explain reality.

According to philosophers such as Immanuel Kant and Francis Hutcheson, the answer must lie somewhere in the middle. Essentially, they argue that a mind that can distance itself from its own individual beliefs can also recognise if something is beautiful in a general, objective sense. Hume suggests that this seemingly universal standard of beauty arises when the tastes of multiple, credible experts align. And yet, whether or not this so-called beautiful thing evokes feelings of pleasure is ultimately contingent upon the subjective interpretation of the viewer themselves.

Looking good vs being good

If this seemingly endless debate has only reinforced your belief that beauty is a trivial concern, then you are not alone! During modernity and postmodernity, philosophers largely abandoned the concept in pursuit of more pressing matters – read: nuclear bombs and existential dread. Artists also expressed their disdain for beauty, perceived as a largely inaccessible relic of tired ways of thinking, through an expression of the anti-aesthetic.

Nevertheless, we should not dismiss the important role beauty plays in our day-to-day life. Whilst its association with morality has long been out of vogue among philosophers, this is not true of broader society. Psychological studies continually observe a ‘halo effect’ around beautiful people and things that sees us interpret them in a more favourable light, leading them to be paid higher wages and receive better loans than their less attractive peers.

Social media makes it easy to feel that we are not good enough, particularly when it comes to looks. Perhaps uncoincidentally, we are, on average, increasing our relative spending on cosmetics, clothing, and other beauty-related goods and services.

Turning to philosophy may help us avoid getting caught in a hamster wheel of constant comparison. From a classical perspective, the best way to achieve beauty is to be a good person. Or maybe you side with the subjectivists, who tell us that being beautiful is meaningless anyway. Irrespective, beauty is complicated, ever-important, and wonderful – so long as we do not let it unfairly cloud our judgements.

Ethics Explainer: Pragmatism

Pragmatism is a philosophical school of thought that, broadly, is interested in the effects and usefulness of theories and claims.

Pragmatism is a distinct school of philosophical thought that began at Harvard University in the late 19th century. Charles Sanders Peirce and William James were members of the university’s ‘Metaphysical Club’ and both came to believe that many disputes taking place between its members were empty concerns. In response, the two began to form a ‘Pragmatic Method’ that aimed to dissolve seemingly endless metaphysical disputes by revealing that there was nothing to argue about in the first place.

How it came to be

Pragmatism is best understood as a school of thought born from a rejection of metaphysical thinking and the traditional philosophical pursuits of truth and objectivity. The Socratic and Platonic theories that form the basis of a large portion of Western philosophical thought aim to find and explain the “essences” of reality and uncover truths that are believed to be obscured from our immediate senses.

This Platonic aim for objectivity, in which knowledge is taken to be an uncovering of truth, is one which would have been shared by many members of Peirce and James’ ‘Metaphysical Club’. In one of his lectures, James offers an example of a metaphysical dispute:

A squirrel is situated on one side of a tree trunk, while a person stands on the other. The person quickly circles the tree hoping to catch sight of the squirrel, but the squirrel also circles the tree at an equal pace, such that the two never enter one another’s sight. The grand metaphysical question that follows? Does the man go round the squirrel or not?

Seeing his friends ferociously arguing for their distinct positions led James to suggest that the correctness of any position simply turns on what someone practically means when they say, ‘go round’. In this way, the answer to the question has no essential, objectively correct response. Instead, the correctness of the response is contingent on how we understand the relevant features of the question.

Truth and reality

Metaphysics often talks about truth as a correspondence to or reflection of a particular feature of “reality”. In this way, the metaphysical philosopher takes truth to be a process of uncovering (through philosophical debate or scientific enquiry) the relevant feature of reality.

On the other hand, pragmatism is more interested in how useful any given truth is. Instead of thinking of truth as an ultimately achievable end where the facts perfectly mirror some external objective reality, pragmatism instead regards truth as functional or instrumental (James) or the goal of inquiry where communal understanding converges (Peirce).

Take gravity, for example. Pragmatism doesn’t view it as true because it’s the ‘perfect’ understanding and explanation for the phenomenon, but it does view it as true insofar as it lets us make extremely reliable predictions and it is where vast communal understanding has landed. It’s still useful and pragmatic to view gravity as a true scientific concept even if in some external, objective, all-knowing sense it isn’t the perfect explanation or representation of what’s going on.

In this sense, truth is capable of changing and is contextually contingent, unlike in traditional views. Pragmatism argues that what is considered ‘true’ may shift or multiply when new groups come along with new vocabularies and new ways of seeing the world.

To reconcile these constantly changing states of language and belief, Peirce constructed a ‘Pragmatic Maxim’ to act as a method by which thinkers can clarify the meaning of the concepts embedded in particular hypotheses. One formulation of the maxim is:

Consider what effects, which might conceivably have practical bearings, we conceive the object of our conception to have. Then, our conception of those effects is the whole of our conception of the object.

In other words, Peirce is saying that the disagreement in any conceptual dispute should be describable in a way which impacts the practical consequences of what is being debated. Pragmatic conceptions of truth take seriously this commitment to practicality. Richard Rorty, who is considered a neopragmatist, writes extensively on a particular pragmatic conception of truth.

Rorty argues that the concept of ‘truth’ is not dissimilar to the concept of ‘God’, in the way that there is very little one can say definitively about God. Rorty suggests that rather than aiming to uncover truths of the world, communities should instead attempt to garner as much intersubjective agreement as possible on matters they agree are important.

Rorty wants us to stop asking questions like, ‘Do human beings have inalienable human rights?’, and begin asking questions like, ‘Should we work towards obtaining equal standards of living for all humans?’. The first question is at risk of leading us down the garden path of metaphysical disputes in ways the second is not. As the pragmatist is concerned with practical outcomes, questions which deal in ‘shoulds’ are more aligned with positing future-directed action than those which get stuck in metaphysical mud.

Perhaps the pragmatists simply want us to ask ourselves: Is the question we’re asking, or hypothesis that we’re posing, going to make a useful difference to addressing the problem at hand? Useful, as Rorty puts it, is simply that which gets us more of what we want, and less of what we don’t want. If what we want is collective understanding and successful communication, we can get it by testing whether the questions we are asking get us closer to that goal, not further away.

Ethics Explainer: Autonomy

BY The Ethics Centre 22 NOV 2021

Autonomy is the capacity to form beliefs and desires that are authentic and in our best interests, and then act on them.

What is it that makes a person autonomous? Intuitively, it feels like a person with a gun held to their head is likely to have less autonomy than a person enjoying a meandering walk, peacefully making a choice between the coastal track or the inland trail. But what exactly are the conditions which determine someone’s autonomy?

Is autonomy just a measure of how free a person is to make choices? How might a person’s upbringing influence their autonomy, and their subsequent capacity to act freely? Exploring the concept of autonomy can help us better understand the decisions people make, especially those we might disagree with.

The definition debate

Autonomy, broadly speaking, refers to a person’s capacity to adequately self-govern their beliefs and actions. All people are in some way influenced by powers outside of themselves, through laws, their upbringing, and other influences. Philosophers aim to distinguish the degree to which various conditions impact our understanding of someone’s autonomy.

There remain many competing theories of autonomy.

These debates are relevant to a whole host of important social concerns that hinge on someone’s independent decision-making capability. This often results in people using autonomy as a means of justifying or rebuking particular behaviours. For example, “Her boss made her do it, so I don’t blame her” and “She is capable of leaving her boyfriend, so it’s her decision to keep suffering the abuse” are both statements that indirectly assess the autonomy of the subject in question.

In the first case, an employee is deemed to lack the autonomy to do otherwise and is therefore taken to not be blameworthy. In the latter case, the opposite conclusion is reached. In both, an assessment of the subject’s relative autonomy determines how their actions are evaluated by an onlooker.

Autonomy often appears to be synonymous with freedom, but the two concepts come apart in important ways.

Autonomy and freedom

There are numerous accounts of both concepts, so in some cases there is overlap, but for the most part autonomy and freedom can be distinguished.

Freedom tends to be broader and more overt. It usually speaks to constraints on our ability to act on our desires. This is sometimes also referred to as negative freedom. Autonomy speaks to the independence and authenticity of the desires themselves, which directly inform the acts that we choose to take. This has a lot in common with positive freedom.

For example, we can imagine a person who has the freedom to vote for any party in an election, but was raised and surrounded solely by passionate social conservatives. As a member of a liberal democracy, they have the freedom to vote differently from the rest of their family and friends, but they have never felt comfortable researching other political viewpoints, and greatly fear social rejection.

If autonomy is the capacity a person has to self-govern their beliefs and decisions, this voter’s capacity to self-govern would be considered limited or undermined (to some degree) by social, cultural and psychological factors.

Relational theories of autonomy focus on the ways we relate to others and how they can affect our self-conceptions and ability to deliberate and reason independently.

Relational theories of autonomy were originally proposed by feminist philosophers, aiming to provide a less individualistic way of thinking about autonomy. In the above case, the voter is taken to lack autonomy due to their limited exposure to differing perspectives and fear of ostracism. In other words, the way they relate to people around them has limited their capacity to reflect on their own beliefs, values and principles.

One relational approach to autonomy focuses on this capacity for internal reflection. This approach is part of what is known as the ‘procedural theory of relational autonomy’. If the woman in the abusive relationship is capable of critical reflection, she is thought to be autonomous regardless of her decision.

However, competing theories of autonomy argue that this capacity isn’t enough. These theories say that there are a range of external factors that can shape, warp and limit our decision-making abilities, and failing to take these into account is failing to fully grasp autonomy. These factors can include things like upbringing, indoctrination, lack of diverse experiences, poor mental health, addiction, etc., which all affect the independence of our desires in various ways.

Critics of this view might argue that a conception of autonomy that is this broad makes it difficult to determine whether a person is blameworthy or culpable for their actions, as no individual remains untouched by social and cultural influences. Given this, some philosophers reject the idea that we need to determine the particular conditions which render a person’s actions truly ‘their own’.

Maybe autonomy is best thought of as merely one important part of a larger picture. Establishing a more comprehensively equitable society could lessen the pressure on debates around what is required for autonomous action. Doing so might allow for a broadening of the debate, focusing instead on whether particular choices are compatible with the maintenance of desirable societies, rather than tirelessly examining whether or not the choices a person makes are wholly their own.
